Optimizing Informed Consent Comprehension Assessment: Strategies for Ethical and Effective Clinical Research

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for assessing and optimizing comprehension within the informed consent process.


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for assessing and optimizing comprehension within the informed consent process. Drawing on the latest regulatory guidance and empirical research, we explore foundational challenges in comprehension, detail innovative methodological approaches for digital and co-created content, offer strategies for troubleshooting common pitfalls in risk communication and readability, and validate these techniques through comparative analysis of traditional versus modern consent models. The goal is to equip professionals with actionable insights to enhance participant understanding, meet ethical obligations, and improve the quality of clinical research.

Understanding the Comprehension Gap: Why Traditional Informed Consent Fails Participants

Technical Support Center: Troubleshooting Informed Consent Documents

This technical support center provides researchers, scientists, and drug development professionals with practical guides and solutions for a common critical flaw in clinical trials: informed consent documents that are too complex for participants to understand. Use the resources below to diagnose, troubleshoot, and resolve issues related to document complexity and participant comprehension.

Troubleshooting Guides

Guide 1: Diagnosing Readability and Comprehension Issues

Problem: Participants are not fully understanding the informed consent forms (ICFs), potentially compromising the ethical integrity of the trial and leading to poor recruitment or retention.

Investigation & Diagnosis: Follow this logical workflow to systematically identify the root cause of poor participant comprehension.

Diagnostic Steps:

Measure Document Length:

- Action: Calculate the total word count of your Patient Information Sheet (PIS) and Informed Consent Form (ICF).

- Acceptable Range: No universal standard, but use the shortest document necessary for complete information.

- Troubleshooting Tip: In the consent documents analyzed in [1], the median length was 5,139 words, roughly a 21-minute read for an average reader; this is often excessive for an acutely ill patient [1].

Calculate Readability Scores: Use established metrics to quantitatively assess text difficulty. The table below summarizes key metrics and their implications.

| Readability Metric | Target Range | Problematic Range (as found in COVID-19 trials) | Interpretation |
|---|---|---|---|
| Flesch-Kincaid Grade Level (FKGL) [1] | 8th grade or lower [2] | Median: 9.8 (9.1-10.8) [1] | Corresponds to a 14-15 year old's reading level [1] |
| Flesch Reading Ease (FRES) [1] | 60-100 ("Standard" to "Easy") | Median: 54.6 (47.0-58.3) [1] | Scores below 60 are classified as "difficult" for comprehension [1] |
| Gunning-Fog Index (GFOG) [1] | 8 or lower | Median: 11.8 (10.4-13.0) [1] | Indicates complex sentence structure and word choice [1] |

Assess Language Complexity:

- Action: Analyze the writing style for common issues.

- Check: Sentence length (aim for 15-20 words), percentage of passive sentences (ideally <10%), and avoidance of unnecessary legal or technical jargon [1].

- Troubleshooting Tip: Long sentences and passive voice significantly reduce comprehension, especially for individuals with lower health literacy.
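The diagnostic steps above can be sketched in a few lines of Python. This is a minimal illustration, not a validated tool: the syllable counter is a rough vowel-group heuristic, the function names are hypothetical, and the default reading speed of 245 words per minute is an assumption chosen because it roughly matches the 5,139-word, 21-minute figure quoted above. Dedicated readability software will give slightly different scores.

```python
import re


def count_syllables(word: str) -> int:
    """Rough heuristic: count vowel groups and drop a trailing silent 'e'."""
    word = word.lower()
    count = len(re.findall(r"[aeiouy]+", word))
    if word.endswith("e") and not word.endswith(("le", "ee")) and count > 1:
        count -= 1
    return max(count, 1)


def readability(text: str, words_per_minute: int = 245) -> dict:
    """Return length and readability metrics for a non-empty text."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = [count_syllables(w) for w in words]
    complex_words = sum(1 for s in syllables if s >= 3)

    words_per_sentence = len(words) / len(sentences)
    syllables_per_word = sum(syllables) / len(words)

    return {
        "word_count": len(words),
        "reading_minutes": len(words) / words_per_minute,
        "avg_sentence_length": words_per_sentence,
        # Standard published formulas for the three metrics in the table.
        "fkgl": 0.39 * words_per_sentence + 11.8 * syllables_per_word - 15.59,
        "fres": 206.835 - 1.015 * words_per_sentence - 84.6 * syllables_per_word,
        "gfog": 0.4 * (words_per_sentence + 100 * complex_words / len(words)),
    }


if __name__ == "__main__":
    sample = "You can stop at any time. We will ask you to take one pill each day."
    for name, value in readability(sample).items():
        print(f"{name}: {value:.2f}")
```

Running the function on each draft of the PIS/ICF gives a quick before-and-after comparison against the target ranges in the table.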

Guide 2: Resolving Complexity with Large Language Models (LLMs)

Problem: Manually rewriting complex ICFs to be simpler is time-consuming and may risk omitting critical information.

Solution: Implement a structured, LLM-assisted process to refine and improve consent documents. The steps below follow a methodology reported in a recent mixed-methods study [2].

Resolution Steps:

- Input and Process: Feed the original clinical trial protocol and ICF into an LLM. The Mistral 8x22B model has been successfully used for this purpose, leveraging its large context window to handle lengthy documents [2].

- Refine for Understandability: Use a structured framework like the Readability, Understandability, and Actionability of Key Information (RUAKI) indicators to guide the LLM in reorganizing and clarifying content [2].

- Optimize for Readability: Specifically prompt the LLM to reduce the Flesch-Kincaid Grade Level to below the 8th grade, a common institutional requirement and best practice [2].

- Validate Output: A multidisciplinary team, including clinical researchers and health informaticians, must assess the LLM-generated ICF for completeness and accuracy before use. Studies show LLM-generated ICFs can achieve superior readability and understandability without sacrificing accuracy [2].
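The "optimize, then validate" steps above can be framed as a simple loop with a readability gate. The sketch below is an assumption-laden illustration rather than the method used in [2]: `rewrite` stands in for whatever LLM call your team uses, `grade_level` for whatever readability scorer you trust, and both are hypothetical callables supplied by the caller. The loop only checks the grade level; it does not replace the multidisciplinary review for completeness and accuracy.

```python
from typing import Callable


def refine_until_readable(
    draft: str,
    rewrite: Callable[[str], str],        # hypothetical: wraps your LLM call
    grade_level: Callable[[str], float],  # hypothetical: e.g. an FKGL scorer
    target_grade: float = 8.0,
    max_rounds: int = 3,
) -> tuple[str, float, bool]:
    """Rewrite `draft` until its grade level is below `target_grade`.

    Returns (text, grade, met_target). Stops after `max_rounds` rewrites
    so that a draft that never converges is escalated to human editors.
    """
    text = draft
    grade = grade_level(text)
    for _ in range(max_rounds):
        if grade < target_grade:
            break
        text = rewrite(text)
        grade = grade_level(text)
    return text, grade, grade < target_grade
```

A result with `met_target` set to `False` signals that automated simplification stalled and the document needs manual rewriting before it goes to the review team.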

Frequently Asked Questions (FAQs)

Q1: What is the single most important improvement I can make to my informed consent form? A: Focus on reducing the Flesch-Kincaid Grade Level to 8th grade or lower. This directly addresses the gap between document complexity and the average reading ability of the population, a flaw identified as critical in recent research [1] [2].

Q2: Our ICFs are long because of legal and regulatory requirements. How can we shorten them? A: While total length is a challenge, the focus should be on the comprehensibility of the key information. The 2018 Common Rule mandates a "concise and focused presentation of key information" at the beginning of the ICF [2]. Use structured content methodologies to reuse approved boilerplate text and automate formatting, ensuring consistency and saving time without compromising legal requirements [3].

Q3: Are there proven technological solutions to this problem? A: Yes. Recent mixed-methods research demonstrates that Large Language Models (LLMs) can successfully generate ICFs with significantly improved readability, understandability, and actionability. One study showed LLM-generated ICFs achieved a Flesch-Kincaid grade level of 7.95 versus 8.38 for human-generated versions, and a 100% score in actionability [2].

Q4: Who is responsible for fixing this flaw in a clinical trial? A: Optimizing informed consent is a shared ethical commitment. Sponsors, investigators, and institutional review boards (IRBs) all have a role. Regulatory bodies like the FDA are also promoting a collaborative, global approach to improve the clarity and brevity of consent forms [4] [5].

Q5: How can I measure the "actionability" of an ICF? A: Actionability refers to how well the document enables a person to know what to do based on the information. This can be measured using tools like the RUAKI indicator, which contains specific items to assess whether the ICF clearly states the actions a participant needs to take [2].

The Scientist's Toolkit: Research Reagents & Materials

The following table details key methodological tools and frameworks for conducting research on informed consent comprehension.

| Tool / Material | Function / Explanation | Application in Comprehension Research |

|---|---|---|

| Flesch-Kincaid Grade Level | A validated software algorithm that calculates U.S. grade-level readability based on sentence length and syllables per word [1]. | Provides a quantitative, objective measure of text complexity to benchmark against population literacy levels [1]. |

| Readability, Understandability, and Actionability of Key Information (RUAKI) Indicator | An assessment framework comprising 18 binary-scored items that evaluate the accessibility, comprehensibility, and actionability of information [2]. | Serves as a structured evaluation tool for researchers to empirically test and improve the effectiveness of ICF key information sections [2]. |

| Large Language Model (e.g., Mistral 8x22B) | An artificial intelligence model trained on vast amounts of text data, capable of summarizing, paraphrasing, and simplifying complex language [2]. | Functions as an experimental intervention in research studies to automate and optimize the generation of simplified, participant-friendly consent forms [2]. |

| Prompt Engineering (Least-to-Most) | A technique for interacting with LLMs that breaks down complex tasks into a sequence of simpler, manageable prompts [2]. | A critical methodological step in research protocols to ensure LLMs produce accurate, complete, and appropriately-formatted ICF content [2]. |

FAQs: Implementing the Key Information Guidance

Q1: What is the core requirement of the FDA's 2024 draft guidance on informed consent? The draft guidance introduces two core requirements for informed consent forms (ICFs). First, consent must begin with a concise "Key Information" section designed to help prospective subjects understand the main reasons for or against participating. Second, the entire consent document must be presented in a manner that facilitates understanding [6] [7]. This aims to ensure that individuals can make a truly informed decision.

Q2: What specific content should be included in the "Key Information" section? The Key Information section should provide a focused overview of the most important details [8]. The FDA recommends including:

- The fact that the study involves research and its purpose.

- An explanation of why a person might or might not want to participate.

- The most common and serious risks and discomforts.

- The potential benefits to the subject or others.

- The anticipated duration of participation and a description of the key procedures [9] [8].

Not all elements of informed consent need to be in this section; you can cross-reference the full document for additional details [8].

Q3: What formatting and presentation strategies does the FDA recommend to improve comprehension? The guidance encourages the use of plain language and clear organizational tools. A sample approach endorsed by the FDA is a "bubble format" that uses rounded boxes to present discrete units of information, which research has shown can improve understanding [9] [7]. Other effective strategies include using bulleted lists to break down complex information, combining text with visual aids, and employing electronic consent processes where appropriate [7] [8].

Q4: Is this guidance binding, and what is its current status? As of its issuance in March 2024, this document is a draft guidance and contains non-binding recommendations [6]. It was issued to align with provisions in the revised Common Rule and a corresponding FDA proposed rule. The public comment period for this draft guidance was open until April 30, 2024 [9] [7].

Q5: How can I provide feedback on this draft guidance? You may submit comments at any time, even after the initial comment period. You can submit:

- Electronic comments via the Federal eRulemaking Portal at www.regulations.gov (Docket FDA-2022-D-2997).

- Written comments via mail to the FDA's Dockets Management staff [6] [9].

Your input will be considered before the agencies begin work on the final version of the guidance.

Troubleshooting Common Implementation Challenges

| Challenge | Symptom | Solution & Best Practices |

|---|---|---|

| Overlengthy Key Information | Key section becomes a dense "mini-consent," defeating its purpose. | Strictly summarize only the most critical "why/why not" points. Use cross-references to the main document for comprehensive details and avoid repeating all risk information [8]. |

| Poor Participant Comprehension | Low enrollment, high participant questions, or poor performance on comprehension assessments. | Adopt the "bubble format" or similar visual grouping for discrete information chunks. Use simple language (avoid technical jargon) and integrate visual aids or illustrations, especially for complex concepts or low-literacy populations [9] [7] [8]. |

| Inconsistent Application Across Studies | Wide variability in ICF structure and quality between different study sites or protocols. | Develop a standardized ICF template with a predefined Key Information section structure for your organization. Provide training for investigators and IRBs on the guidance's principles to ensure consistent interpretation and review [8]. |

| Difficulty Explaining Complex Trial Design | Participants struggle with concepts like biomarker-driven enrollment or crossover arms. | In the Key Information section, focus on the practical implications for the participant (e.g., "Your tumor will be tested for a specific marker to see if you are eligible"). Use a visual diagram or flowchart in the full ICF to explain the study design. |

Experimental Protocols for Assessing Comprehension

Protocol 1: Quantitative Assessment of Understanding

Objective: To quantitatively measure the effectiveness of a new ICF format in improving participant understanding.

Methodology:

- Design: Randomized Controlled Trial. Participants are randomly assigned to receive either the standard ICF (Control Group) or the revised ICF with a Key Information section formatted per FDA guidance (Intervention Group).

- Instrument: Develop a validated questionnaire based directly on the core elements of the Key Information section (e.g., purpose, risks, benefits, voluntary nature).

- Procedure:

- After reviewing the ICF, participants complete the questionnaire.

- Scores are calculated based on correct answers.

- Analysis: Compare mean comprehension scores between the Control and Intervention groups using statistical tests (e.g., t-test) to determine if the new format leads to a significant improvement in understanding.
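As a sketch of the analysis step, the snippet below computes a two-sample t statistic using only the Python standard library. It uses Welch's variant, which does not assume equal variances; that choice, the function name, and the example scores are assumptions for illustration. Obtaining the p-value from the statistic and degrees of freedom is left to a statistics package.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(control: list[float], intervention: list[float]) -> tuple[float, float]:
    """Return (t statistic, degrees of freedom) for Welch's t-test.

    A positive t means the intervention group scored higher on average.
    Each group needs at least two scores and some within-group spread.
    """
    n1, n2 = len(control), len(intervention)
    v1 = variance(control) / n1        # squared standard error, control
    v2 = variance(intervention) / n2   # squared standard error, intervention
    t = (mean(intervention) - mean(control)) / sqrt(v1 + v2)
    df = (v1 + v2) ** 2 / (v1 ** 2 / (n1 - 1) + v2 ** 2 / (n2 - 1))
    return t, df


if __name__ == "__main__":
    # Hypothetical questionnaire scores (number of correct answers).
    standard_icf = [6, 7, 5, 8, 6, 7]
    key_info_icf = [8, 9, 7, 9, 8, 10]
    t, df = welch_t(standard_icf, key_info_icf)
    print(f"t = {t:.2f}, df = {df:.1f}")
```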

Protocol 2: Qualitative Feedback on Usability

Objective: To gather in-depth user feedback on the clarity, organization, and usability of the ICF.

Methodology:

- Design: Qualitative study using semi-structured interviews or focus groups.

- Participants: Recruit a diverse group of potential participants representative of the target study population.

- Procedure:

- Participants are given the new ICF to review.

- A trained facilitator conducts an interview or focus group using a discussion guide with open-ended questions (e.g., "What were the main reasons you might not want to participate?", "Was there anything you found confusing?", "How did you find the layout of the first page?").

- Analysis: Record, transcribe, and perform thematic analysis on the interviews. Identify recurring themes related to comprehension barriers, points of clarity, and overall user experience with the document format.

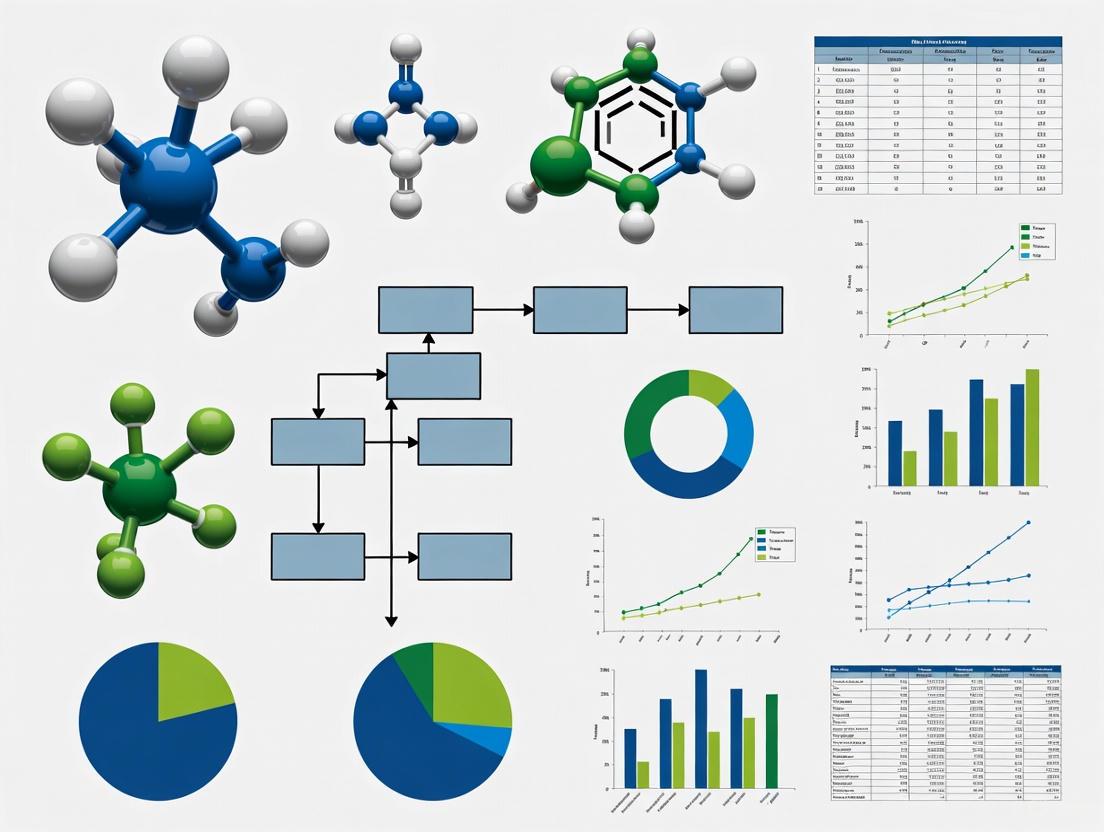

ICF Comprehension Assessment Workflow

Research Reagent Solutions for Compliance and Assessment

| Research 'Reagent' | Function in Optimizing Informed Consent |

|---|---|

| Plain Language Guidelines | Provides standardized rules for simplifying complex medical and technical jargon, serving as a foundational tool for drafting understandable consent forms. |

| Readability Assessment Tools (e.g., Flesch-Kincaid) | Quantifies the reading grade level of an ICF, allowing researchers to objectively measure and adjust text complexity to match the target population. |

| Validated Comprehension Questionnaires | Acts as a calibrated instrument to quantitatively measure participant understanding of core ICF elements, providing data for evidence-based ICF improvements. |

| Electronic Consent (eConsent) Platforms | Enables the integration of interactive elements (e.g., hyperlinks, embedded videos, knowledge checks) to present key information in a more engaging and accessible manner. |

| Visual Aid Libraries | A repository of pre-designed, culturally appropriate illustrations and icons that can be used to explain complex procedures or concepts within the Key Information section. |

Frequently Asked Questions

Q1: Why is the color contrast of text in my experimental workflow diagrams critical for research on informed consent? Poor color contrast can obscure critical information, reducing the comprehension of research protocols for participants. Adhering to WCAG 2.1 Level AAA standards ensures that visual aids are accessible to individuals with low vision or color deficiencies, which is a fundamental requirement for valid informed consent comprehension assessment [10] [11]. Diagrams with insufficient contrast can introduce bias into your research results.

Q2: How can I quickly check if the colors in my chart or diagram have sufficient contrast? You can use free online tools like the WebAIM Contrast Checker [12]. These tools allow you to input foreground and background color values (in HEX or RGB) and will immediately calculate the contrast ratio and indicate if it passes WCAG AA and AAA standards for both normal and large text [12].

Q3: What are the minimum contrast ratios I should aim for in my research materials? The required contrast ratio depends on the text size and the specific WCAG conformance level. For the enhanced (Level AAA) standard, which is recommended for critical research materials, the requirements are stricter [10] [11].

| Text Type | Size and Weight Definition | Minimum Contrast Ratio (Level AAA) |

|---|---|---|

| Large Text | 18 point (24px) or larger, or 14 point (18.66px) and bold [12] [11] | 4.5:1 [10] [11] |

| Normal Text | Anything smaller than large text [12] | 7:1 [10] [11] |
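The ratios in the table above can also be checked in code. The sketch below follows the WCAG 2.x definitions of relative luminance and contrast ratio; the function names are illustrative, and colors are assumed to be six-digit HEX strings.

```python
def _linear(channel: int) -> float:
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG 2.x)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """WCAG contrast ratio, from 1.0 (identical) to 21.0 (black on white)."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aaa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check the Level AAA thresholds: 7:1 normal text, 4.5:1 large text."""
    return contrast_ratio(foreground, background) >= (4.5 if large_text else 7.0)


if __name__ == "__main__":
    print(round(contrast_ratio("#000000", "#FFFFFF"), 2))
    print(passes_aaa("#777777", "#FFFFFF"))
```

A check like this is useful for batch-validating a palette before it is applied across all consent figures; an interactive checker remains handy for spot checks.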

Q4: In Graphviz, how do I explicitly set a high-contrast text color for a node?

You must define both the `fillcolor` (background of the node) and the `fontcolor` (color of the text) for each node or subgraph in your DOT script. Note that `fillcolor` only takes effect when the node's `style` includes `filled`. Relying on default settings often leads to poor contrast.
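One way to apply this consistently is to generate the DOT source from a small helper so that no node is emitted without an explicit color pair. The sketch below builds the DOT text as a plain string in Python; the helper names, node names, and HEX colors are hypothetical, and the output can be pasted into any Graphviz renderer.

```python
def dot_node(name: str, label: str, fillcolor: str, fontcolor: str) -> str:
    """Return one DOT node statement with explicit fill and font colors."""
    return (
        f'  {name} [label="{label}", style=filled, '
        f'fillcolor="{fillcolor}", fontcolor="{fontcolor}"];'
    )


def build_dot(nodes: list[tuple[str, str, str, str]],
              edges: list[tuple[str, str]]) -> str:
    """Assemble a small directed graph in the DOT language."""
    lines = ["digraph consent_workflow {", "  node [shape=box];"]
    lines += [dot_node(*node) for node in nodes]
    lines += [f"  {src} -> {dst};" for src, dst in edges]
    lines.append("}")
    return "\n".join(lines)


if __name__ == "__main__":
    nodes = [
        # Dark fill paired with white text, light fill paired with black text.
        ("recruit", "Recruit participants", "#1A237E", "#FFFFFF"),
        ("assess", "Assess comprehension", "#FFF8E1", "#000000"),
    ]
    print(build_dot(nodes, [("recruit", "assess")]))
```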

Troubleshooting Guides

Issue: Low comprehension scores for data frequency information in consent forms.

Problem: Participants incorrectly estimate the likelihood of research risks and benefits when frequency data is presented only in dense textual formats [10].

Solution: Supplement text with well-designed visual aids.

- Protocol: Integrate simplified icon arrays or bar charts directly into consent documents to represent frequencies (e.g., 1 in 100). This converts abstract numbers into concrete visual proportions.

- Validation: During pilot testing, use a two-group design. The control group receives the text-only version, while the experimental group receives the version with visual aids. Compare comprehension scores on frequency-related questions using a standardized assessment tool.
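To make the icon-array idea concrete, the sketch below renders a frequency as a text grid. It is a stand-in for a designed graphic, not a substitute for one: the function name and the ASCII symbols are illustrative assumptions, and production consent forms would use proper icons.

```python
def icon_array(affected: int, total: int = 100, per_row: int = 10,
               filled: str = "#", empty: str = ".") -> str:
    """Render `affected` out of `total` as rows of filled and empty icons."""
    if not 0 <= affected <= total:
        raise ValueError("affected must be between 0 and total")
    icons = [filled] * affected + [empty] * (total - affected)
    rows = [" ".join(icons[i:i + per_row]) for i in range(0, total, per_row)]
    return "\n".join(rows)


if __name__ == "__main__":
    # "10 in 100 people had a headache" shown as one filled row out of ten.
    print(icon_array(10))
```

Keeping the denominator fixed (for example, always 100) across all risks in a document lets participants compare frequencies at a glance.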

Issue: Generated diagrams (e.g., from Graphviz) have poor text readability.

Problem: The text inside nodes is difficult to read because the color does not sufficiently contrast with the node's background. This often happens when colors are chosen manually without verification [10].

Solution: Systematically apply and verify color choices in your diagramming tools.

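- Protocol for Graphviz in R (DiagrammeR): Use the `node_aes()` function to explicitly set the `fontcolor` based on the `fillcolor` [13].

- Protocol for Automated Color Selection: For dynamic environments, implement an algorithm to choose white or black text based on the background color's perceived brightness. The W3C-recommended formula computes perceived brightness from the 0-255 red, green, and blue channel values [14]: brightness = (299 × R + 587 × G + 114 × B) / 1000. Dark backgrounds (low brightness) take white text; light backgrounds take black text.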

- Verification Step: Always run your final diagram through a contrast checker. Apply the "large text" threshold only to labels that meet the size definition (18 point, or 14 point and bold); check smaller labels against the normal-text ratio [12].
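The automated color selection described above can be sketched as follows. The brightness formula is the weighted RGB sum published by the W3C, (299 × R + 587 × G + 114 × B) / 1000; the function names and the cut-off of 128 (the midpoint of the 0-255 scale) are assumptions for illustration. The chosen pair should still be confirmed with a contrast checker.

```python
def perceived_brightness(hex_color: str) -> float:
    """Perceived brightness (0-255) of a '#RRGGBB' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (299 * r + 587 * g + 114 * b) / 1000


def pick_fontcolor(fillcolor: str, threshold: float = 128) -> str:
    """Return black text for light fills and white text for dark fills.

    `threshold` is an assumed cut-off, not a value taken from a standard.
    """
    return "#000000" if perceived_brightness(fillcolor) >= threshold else "#FFFFFF"


if __name__ == "__main__":
    for fill in ("#1A237E", "#FFF8E1", "#4CAF50"):
        print(fill, "->", pick_fontcolor(fill))
```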

Research Reagent Solutions

Essential digital tools and their functions for creating accessible visual communication materials.

| Tool or Resource Name | Function in Research |

|---|---|

| WebAIM Contrast Checker [12] | Validates the contrast ratio between foreground and background colors to ensure compliance with WCAG guidelines. |

| Graphviz (DOT language) | Generates consistent, reproducible diagrams for illustrating experimental workflows and conceptual models. |

| color-contrast-checker (npm package) [15] | Allows programmatic validation of color contrast within automated data visualization pipelines. |

| prismatic::best_contrast() (R function) [16] | Automatically selects the best contrasting text color (e.g., white or black) for a given background color in R graphics. |

| W3C Contrast (Enhanced) Rule [10] | Provides the formal technical standard and testing framework for achieving 7:1 (normal text) and 4.5:1 (large text) contrast ratios. |

Experimental Protocol: Assessing the Impact of Visual Aids on Comprehension

1. Objective: To quantitatively evaluate whether supplementing text-based risk frequency information with icon arrays improves comprehension accuracy in informed consent forms.

2. Methodology:

- Design: A randomized controlled trial with two parallel groups.

- Participants: Recruited from a relevant participant pool (e.g., patient groups, general public), matched for demographics and baseline numeracy.

- Intervention:

- Control Group: Receives a traditional, text-only consent form describing the frequency of potential side effects.

- Intervention Group: Receives the same consent form where key frequencies are additionally visualized using icon arrays (e.g., 10 filled icons out of 100 total to represent 10%).

- Assessment: Immediately after reviewing the form, all participants complete a standardized questionnaire designed to assess their comprehension of the risk frequencies, overall procedure, and potential benefits.

3. Data Analysis:

- Compare mean comprehension scores between the two groups using an independent samples t-test.

- Conduct a subgroup analysis based on participant numeracy levels to determine if the effect of the visual aid is moderated by this factor.
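A first, descriptive look at the subgroup question can be sketched as below: the difference in mean comprehension between arms is computed separately for each numeracy level. The record layout, group labels, and scores are hypothetical. This only summarizes the data; a formal test of moderation would typically add a group-by-numeracy interaction term in a regression model.

```python
from collections import defaultdict
from statistics import mean


def subgroup_differences(records):
    """Mean score difference (intervention minus control) per numeracy level.

    `records` is an iterable of (numeracy_level, group, score) tuples, where
    group is "control" or "intervention". Every numeracy level must contain
    at least one score from each group.
    """
    scores = defaultdict(lambda: defaultdict(list))
    for numeracy, group, score in records:
        scores[numeracy][group].append(score)
    return {
        level: mean(groups["intervention"]) - mean(groups["control"])
        for level, groups in scores.items()
    }


if __name__ == "__main__":
    # Hypothetical comprehension scores (percent correct).
    data = [
        ("low", "control", 50), ("low", "control", 60),
        ("low", "intervention", 70), ("low", "intervention", 80),
        ("high", "control", 80), ("high", "control", 90),
        ("high", "intervention", 85), ("high", "intervention", 95),
    ]
    print(subgroup_differences(data))
```

A larger gap in the low-numeracy stratum than in the high-numeracy stratum would be consistent with the visual aid helping most where numerical text is hardest to interpret.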

Participant Comprehension Workflow

The diagram below visualizes the experimental protocol for assessing informed consent comprehension, from participant recruitment to data analysis. The node colors and text are formatted to ensure high accessibility.

Logical Pathway for Accessible Diagram Creation

This flowchart outlines the decision-making process for ensuring text is readable against its background in scientific diagrams, a critical step for creating accessible visual aids.

Implementing Effective Assessment and Enhancement Strategies

For researchers aiming to optimize the assessment of informed consent comprehension, the Quality of Informed Consent (QuIC) and the Decision-Making Control Instrument (DMCI) serve as essential, validated tools. These instruments allow for the standardized and empirical evaluation of two core components of a valid consent process: the participant's understanding of the information presented (QuIC) and the voluntariness of their decision (DMCI).

The QuIC is a reliable questionnaire that measures both the actual (objective) and perceived (subjective) understanding that research subjects have of the clinical trial they are joining [17]. It was developed to incorporate the basic elements of informed consent specified in federal regulations and can be adapted for different study populations [18] [17].

The DMCI was developed to fill a gap in the empirical assessment of the voluntariness of consent. It measures a participant's perception of control over the decision-making process, assessing three dimensions: Self-Control, Absence of Control, and Others’ Control [19] [20]. Using these tools in tandem provides a more holistic assessment of the informed consent process, moving beyond theoretical assumptions to data-driven insights.

Instrument Specifications and Comparison

The table below summarizes the core characteristics of the QuIC and DMCI to help researchers select the appropriate tool.

Table 1: Key Characteristics of the QuIC and DMCI

| Feature | Quality of Informed Consent (QuIC) | Decision-Making Control Instrument (DMCI) |

|---|---|---|

| Primary Construct Measured | Understanding and Comprehension | Voluntariness and Perceived Control |

| Key Domains | Objective understanding (factual knowledge), Subjective understanding (perceived knowledge) [21] [17]. | Self-Control, Absence of Control, Others' Control [19] [20]. |

| Typical Format | Two-part questionnaire (Part A: objective knowledge; Part B: perceived understanding) [18] [21]. | 9-item questionnaire with three subscales [20]. |

| Scoring & Interpretation | Higher scores indicate better understanding [21]. | Higher total scores (max 30) indicate greater perceived autonomy [21]. |

| Validation Populations | Cancer clinical trial patients [17], adapted for minors, pregnant women, and adults in vaccine trials [18]. | Parents making decisions for seriously ill children [19] [20]. |

| Administration Time | Brief; average of ~7 minutes [17]. | Not explicitly stated, but designed for use soon after decision is made [19]. |

Experimental Protocols & Key Findings

Protocol: Evaluating Telehealth vs. In-Person Informed Consent

A 2025 randomized controlled trial provides a robust methodology for using the QuIC and DMCI to compare two consent delivery modalities [22] [21].

- Objective: To evaluate if teleconsent (via Doxy.me) achieves equivalent participant comprehension and decision-making control compared to traditional in-person consent [21].

- Study Design: Randomized comparative study with 64 participants assigned to either teleconsent or in-person groups [22] [21].

- Participant Recruitment: Identified through an institutional online platform; eligibility confirmed via survey [21].

- Consent Process: The teleconsent group reviewed and e-signed forms via Doxy.me with real-time researcher interaction. The in-person group met in a private office [21].

- Data Collection:

- Key Findings:

- No significant difference in QuIC Part A (objective understanding), QuIC Part B (subjective understanding), or DMCI (voluntariness) scores between teleconsent and in-person groups [22] [21].

- This demonstrates that teleconsent is a viable alternative, offering equivalent understanding and voluntariness while overcoming geographic barriers [22].

The following workflow diagram illustrates the experimental design of this comparative study:

Protocol: Assessing Digital Informed Consent in Multinational Trials

A 2025 cross-sectional study evaluated digitally implemented informed consent (eIC) based on i-CONSENT guidelines, showcasing the QuIC's adaptability [18].

- Objective: To assess comprehension and satisfaction with eIC materials tailored for minors, pregnant women, and adults across Spain, the UK, and Romania [18].

- Materials Development: eIC materials were co-created with target populations via design thinking sessions and surveys. Formats included layered web content, narrative videos, and infographics [18].

- Comprehension Assessment: Used adapted versions of the QuIC for each population. Objective comprehension (QuIC part A) was categorized as low (<70%), moderate (70-80%), adequate (80-90%), or high (≥90%) [18].

- Key Findings:

- Comprehension Scores: Mean objective QuIC scores were 83.3 (minors), 82.2 (pregnant women), and 84.8 (adults), all within the "adequate" band (80-90%) defined above [18].

- Format Preferences: Minors and pregnant women preferred videos, while adults favored text, highlighting the need for multimodal consent materials [18].

- Satisfaction: Over 97% of participants in all groups reported high satisfaction with the eIC materials [18].

Table 2: Comprehension Scores and Preferences from the Multinational eIC Study

| Population Group | Sample Size (n) | Mean Objective QuIC Score (SD) | Comprehension Category | Preferred Format |

|---|---|---|---|---|

| Minors | 620 | 83.3 (13.5) | Adequate | Video (61.6%) |

| Pregnant Women | 312 | 82.2 (11.0) | Adequate | Video (48.7%) |

| Adults | 825 | 84.8 (10.8) | Adequate | Text (54.8%) |
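The objective-comprehension bands described in the protocol above (low, moderate, adequate, high) can be applied programmatically when scoring adapted QuIC questionnaires. In the sketch below, the function name is illustrative, and the handling of boundary values is an assumption: a score of exactly 70, 80, or 90 is placed in the higher band, since the published ranges share their endpoints.

```python
def quic_category(score: float) -> str:
    """Map an objective QuIC score (0-100, percent) to a comprehension band."""
    if not 0 <= score <= 100:
        raise ValueError("score must be between 0 and 100")
    if score < 70:
        return "low"
    if score < 80:
        return "moderate"
    if score < 90:
        return "adequate"
    return "high"


if __name__ == "__main__":
    for s in (65, 75, 85, 95):
        print(s, quic_category(s))
```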

Troubleshooting Guides & FAQs

FAQ 1: How Do I Adapt the QuIC for My Specific Study Population?

- Challenge: The core QuIC was validated in cancer trials [17], but your research may involve different populations (e.g., minors, non-English speakers).

- Solution:

- Follow a Participatory Co-creation Process: As demonstrated in the multinational trial, conduct design thinking sessions with representatives from your target population to review and adapt the QuIC items for clarity and relevance [18].

- Ensure Linguistic and Cultural Validation: For multinational studies, use professional translation followed by independent review to ensure conceptual equivalence, not just literal translation [18].

- Pilot the Adapted Tool: Always pilot the revised QuIC with a small sample from the target group to identify any remaining issues with comprehension or item wording [18].

FAQ 2: What Factors Can Influence DMCI or QuIC Scores and How Can We Control For Them?

- Challenge: Scores on your assessment tools may be confounded by external variables, making it difficult to isolate the effect of your consent intervention.

- Solution:

- Measure and Control for Health Literacy: Health literacy, measured by tools like the SAHL-E, can significantly impact comprehension scores. The telehealth study found a statistically significant difference in health literacy between groups, underscoring the need to account for it in analysis [21].

- Consider Educational Attainment: A study in Italian cancer patients found that a higher level of education was associated with increased understanding of the informed consent [23]. Collect education data as a potential covariate.

- Be Cautious with "Experienced" Participants: The multinational eIC study found that prior trial participation was unexpectedly associated with lower comprehension scores, suggesting overconfidence or disengagement. Consider providing tailored information for returning participants [18].

FAQ 3: How Can We Effectively Implement Teleconsent Without Compromising Validity?

- Challenge: Concerns about verifying identity, ensuring engagement, and technological barriers during remote consent.

- Solution:

- Use a Secure, Interactive Platform: Employ a HIPAA-compliant platform like Doxy.me that allows for real-time screen sharing of the consent document and live interaction between researcher and participant [21].

- Verify Identity Proactively: During teleconsent, ask participants to enable their cameras for the entire session. Use platform features to take a timestamped screenshot during the e-signing process to create an audit trail [21].

- Leverage Built-in Features: Use the platform's annotation tools to collaboratively review the form, highlight key sections, and ensure the participant follows along [24].

Research Reagent Solutions

The table below lists the key "research reagents" — the essential tools and materials — required to implement the methodologies described in this guide effectively.

Table 3: Essential Research Reagents and Materials for Informed Consent Assessment

| Item Name | Specifications / Recommended Type | Primary Function in Research |

|---|---|---|

| Validated QuIC Questionnaire | Original (20 objective, 14 subjective items) [17] or culturally/population-adapted versions [18]. | Measures objective and subjective understanding of the informed consent. |

| Validated DMCI Questionnaire | 9-item form measuring Self-Control, Absence of Control, and Others' Control [20]. | Assesses the perceived voluntariness of the consent decision. |

| Health Literacy Assessment Tool | Short Assessment of Health Literacy-English (SAHL-E) [21] or equivalent in target language. | Controls for a key confounding variable that impacts comprehension scores. |

| Teleconsent Platform | HIPAA-compliant video conferencing software with screen sharing and e-signature capabilities (e.g., Doxy.me) [21] [24]. | Facilitates remote consent administration while maintaining interaction and documentation. |

| Digital Consent (eIC) Materials | Multi-format materials: Layered web pages, narrative videos, infographics [18]. | Enhances participant engagement and comprehension by catering to diverse preferences. |

The following diagram outlines the logical relationship and workflow for integrating these tools into a comprehensive consent assessment strategy:

Troubleshooting eConsent: Frequently Asked Questions

This guide provides solutions to common technical and user-experience issues encountered when implementing or using electronic informed consent (eConsent) platforms in a research setting.

Registration and Login

Q: The participant did not receive the expected eConsent email. What should I do? A: First, check the eConsent status in your system. If the status is "Delivered," verify the participant's email address in their record is correct. If it is incorrect, correct the address, cancel the original eConsent form, and resend it. If the email is correct, ask the participant to check their spam or trash folders. If the status is not "Delivered" after an hour, there may be a system error, and you should contact your platform's technical support [25].

Q: A participant has lost their eConsent email or deleted it before completing the form. How can they proceed? A: Ask the participant to check their spam or trash folders. If they have not yet registered an account, you can cancel the original eConsent form and send a new one. If they have already registered but not signed, they can log in directly to the patient portal, where their pending tasks will be displayed [25].

Q: A participant is having trouble logging in because their credentials are not recognized. A: Confirm the participant is using the same email address that is on file with your site. Verify that the Caps Lock key is not on and assist them with the password reset function if needed. Also, ensure the participant is trying to log in to the correct regional website for their account (e.g., the EU site vs. the US site) [25].
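The missing-email guidance in the first question of this section can be sketched as a small decision function. This is only an illustration: the "Delivered" status and the one-hour threshold come from the guidance above, while the function name, parameters, and returned action labels are hypothetical and do not correspond to any particular platform's API. The "wait and recheck" branch is an assumption for the case the guidance does not cover.

```python
def next_email_action(status: str, email_is_correct: bool, minutes_since_send: int) -> str:
    """Suggest a next step when a participant reports no eConsent email.

    All names and action labels here are illustrative assumptions.
    """
    if status == "Delivered":
        if not email_is_correct:
            # Wrong address on file: fix it, cancel the original form, resend.
            return "correct_address_cancel_and_resend"
        # Address is right, so the message was probably filtered.
        return "ask_participant_to_check_spam_and_trash"
    if minutes_since_send >= 60:
        # Still not delivered after an hour: possible system error.
        return "contact_platform_support"
    # Assumption: before the hour has passed, simply check again later.
    return "wait_and_recheck_status"


print(next_email_action("Delivered", email_is_correct=False, minutes_since_send=10))
```

Encoding the steps this way makes the order of checks explicit (status first, then address, then elapsed time), which is the order the guidance follows.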

Reviewing and Signing

Q: A participant reports the text is too small to read comfortably. A: In the web browser, participants can use the zoom function (often Ctrl and the plus key on Windows, or Cmd and the plus key on Mac) to enlarge the text. Furthermore, ensure your eConsent platform supports accessibility features like screen readers and keyboard navigation [25].

Q: Why is a consent form grayed out or unavailable for a participant to review? A: This typically occurs when forms are required to be completed in a specific signing order. The participant must first complete the consent forms that are higher on the task list before they can access subsequent ones [25].

Q: A participant cannot sign the form because the system states they haven't read all sections. A: Guide the participant to the table of contents, which should indicate which sections are incomplete (often marked with an orange highlight or lack a green checkmark). The participant needs to view each section and answer all required questions before the signature field becomes active [25].
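The signing rule described in the last answer (every section viewed and every required question answered before the signature field activates) can be sketched as follows. The `Section` class and both function names are hypothetical; real platforms will model this differently.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Section:
    """One section of a consent form (illustrative model, not a platform API)."""
    title: str
    viewed: bool
    required_answered: bool  # True when all required questions are answered


def incomplete_sections(sections: List[Section]) -> List[str]:
    """Titles a table of contents would flag as incomplete."""
    return [s.title for s in sections if not (s.viewed and s.required_answered)]


def signature_enabled(sections: List[Section]) -> bool:
    """The signature field is active only when nothing is incomplete."""
    return not incomplete_sections(sections)


form = [
    Section("Purpose of the study", viewed=True, required_answered=True),
    Section("Risks", viewed=True, required_answered=False),
    Section("Data protection", viewed=False, required_answered=False),
]
print(incomplete_sections(form))  # sections the participant still has to finish
print(signature_enabled(form))
```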

Experimental Protocols and Research Data

The following data and methodologies are derived from recent multinational studies evaluating the effectiveness of eConsent materials designed according to the i-CONSENT guidelines.

Participant Comprehension and Satisfaction

A 2025 cross-sectional study evaluated the comprehension and satisfaction of 1,757 participants across Spain, the UK, and Romania using tailored eConsent materials. Comprehension was assessed using an adapted Quality of the Informed Consent (QuIC) questionnaire, with objective comprehension scores categorized as low (<70%), moderate (70%-80%), adequate (80%-90%), or high (≥90%). Satisfaction was measured via Likert scales, with scores ≥80% deemed acceptable [26] [18] [27].

Table 1: Objective Comprehension Scores by Participant Group

| Participant Group | Sample Size (n) | Mean Comprehension Score (SD) | Comprehension Category |
|---|---|---|---|
| Minors | 620 | 83.3 (13.5) | Adequate |
| Pregnant Women | 312 | 82.2 (11.0) | Adequate |
| Adults | 825 | 84.8 (10.8) | Adequate |

Source: Fons-Martinez et al., 2025 [26] [18] [27]
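The score bands used in the study can be written as a short classification function. This is a minimal sketch: the thresholds are those stated above, but assigning a score of exactly 80% to "adequate" is an assumption, since the reported bands share their boundary values. The function name is hypothetical.

```python
def comprehension_category(score_pct: float) -> str:
    """Map an objective comprehension score (0-100) to its reported band."""
    if not 0 <= score_pct <= 100:
        raise ValueError("score must be a percentage between 0 and 100")
    if score_pct >= 90:
        return "high"
    if score_pct >= 80:  # assumption: a score of exactly 80 counts as adequate
        return "adequate"
    if score_pct >= 70:
        return "moderate"
    return "low"


# Group means reported in Table 1
for group, mean_score in [("Minors", 83.3), ("Pregnant Women", 82.2), ("Adults", 84.8)]:
    print(group, comprehension_category(mean_score))
```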

Table 2: Participant Satisfaction with eConsent Materials

| Participant Group | Satisfaction Rate | Key Feedback |
|---|---|---|
| Minors | 604/620 (97.4%) | - |
| Pregnant Women | 303/312 (97.1%) | - |
| Adults | 804/825 (97.5%) | 777/825 (94.2%) reported materials facilitated understanding |

Source: Fons-Martinez et al., 2025 [26] [18] [27]

Methodology for eConsent Implementation and Assessment

Protocol 1: Co-creation of eConsent Materials

The following methodology was used to develop the eConsent materials evaluated in the 2025 study [26] [18]:

- Multidisciplinary Team Assembly: Form a team comprising clinical trial physicians, epidemiologists, a sociologist, a journalist, and a nurse.

- Participatory Design Sessions: Conduct design thinking sessions with representatives from the target populations (e.g., minors, pregnant women) to gather input on content, format, and usability.

- Material Development: Create the eConsent materials based on participant feedback. Formats should include:

  - Layered web content for detailed, on-demand information.

  - Narrative videos (e.g., storytelling for minors, Q&A for pregnant women).

  - Printable, improved-format documents.

  - Customized infographics for complex topics (risks, procedures, data protection).

- Cross-Cultural Translation and Adaptation: Professionally translate materials into target languages, ensuring contextual appropriateness and adaptation to local customs. Each translation should be independently reviewed.

Protocol 2: Assessing Comprehension and Satisfaction

This protocol outlines the assessment phase used in the referenced study [26] [18]:

- Platform Setup: Provide participants access to the eConsent materials via a digital platform where they can choose from or combine the available formats.

- Comprehension Assessment: Administer a tailored version of the QuIC questionnaire, which consists of:

  - Part A: Measures objective understanding through multiple-choice or true/false questions.

  - Part B: Measures subjective understanding using a 5-point Likert scale.

- Satisfaction and Usability Assessment: Use Likert scales and direct usability questions to evaluate participant satisfaction and perceived ease of use.

- Data Analysis: Use multivariable regression models to identify demographic and experiential predictors of comprehension (e.g., gender, age, prior trial experience, education level).
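The data analysis step can be illustrated with a small ordinary least squares fit. This is a sketch under stated assumptions, not the study's analysis: the data are synthetic and noise-free, the coefficients (80, +2, -3, +0.5) are invented purely so the fit can be checked, and the helper names are hypothetical. It uses only the standard library; in practice a statistics package would also report standard errors and P values.

```python
def solve_linear_system(a, b):
    """Solve a x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("predictors are collinear; cannot fit the model")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        tail = sum(m[i][j] * x[j] for j in range(i + 1, n))
        x[i] = (m[i][n] - tail) / m[i][i]
    return x


def fit_ols(predictor_rows, outcomes):
    """Return [intercept, beta_1, ...] from the normal equations X'X b = X'y."""
    x = [[1.0] + list(row) for row in predictor_rows]
    k = len(x[0])
    xtx = [[sum(r[i] * r[j] for r in x) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * y for r, y in zip(x, outcomes)) for i in range(k)]
    return solve_linear_system(xtx, xty)


# Synthetic predictors: (female 0/1, prior trial experience 0/1, years of education)
rows = [(0, 0, 10), (1, 0, 12), (0, 1, 14), (1, 1, 16), (1, 0, 9), (0, 1, 11)]
# Synthetic outcome generated from invented coefficients, for checking only
scores = [80 + 2 * f - 3 * p + 0.5 * e for f, p, e in rows]

coefficients = fit_ols(rows, scores)
print([round(c, 3) for c in coefficients])
```

Each fitted coefficient estimates the change in comprehension score associated with one predictor while the others are held fixed, which is how a multivariable model separates the contribution of, for example, prior trial experience from education level.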

Table 3: Preferred eConsent Format by Participant Group

| Participant Group | Preferred Format | Proportion Preferring Format |
|---|---|---|
| Minors | Video | 382/620 (61.6%) |
| Pregnant Women | Video | 152/312 (48.7%) |
| Adults | Text | 452/825 (54.8%) |

Source: Fons-Martinez et al., 2025 [26] [18] [27]

Key findings from recent research include:

- Demographic Predictors: Women and girls consistently outperformed men and boys in comprehension scores. Generation X adults scored higher than millennials. Prior participation in a clinical trial was unexpectedly associated with lower comprehension scores, suggesting a need for tailored engagement for returning participants [26] [27].

- Cultural Adaptation: While translated materials maintained high efficacy, comprehension was lower in some regions among participants with lower educational levels, highlighting that cultural adaptation is as critical as linguistic translation [26] [18].

- User Concerns: A separate 2025 study in China found that while 68% of participants preferred eConsent, major concerns included data security and confidentiality (64.4%), operational complexity (52.3%), and the effectiveness of online interaction (59.3%) [28].

Workflow and System Diagrams

The following diagram illustrates the logical workflow for a multimodal eConsent system designed to optimize participant comprehension.

Multimodal eConsent Comprehension Workflow

The Researcher's Toolkit: Essential eConsent Components

Table 4: Key Research Reagents and Solutions for eConsent Implementation

| Item | Function in eConsent Research |
|---|---|
| Digital Platform with Multimodal Capabilities | A secure system that hosts and delivers eConsent materials in various formats (web, video, infographics, documents) and manages the consenting workflow [26] [29]. |
| Quality of Informed Consent (QuIC) Questionnaire | A validated instrument adapted to assess objective and subjective comprehension of the consent information among participants [26] [18] [27]. |
| Co-creation Framework (e.g., Design Thinking) | A participatory methodology for involving target populations (minors, pregnant women, etc.) in the design of eConsent materials to ensure relevance and clarity [26] [18]. |
| Professional Translation & Cultural Adaptation Protocol | A rigorous process for translating and culturally adapting consent materials, ensuring they are contextually appropriate for multinational trials [26] [18]. |
| Comprehension Check Modules | Integrated interactive quizzes or questions within the eConsent platform to verify participant understanding before signing [29] [30]. |

Informed consent (IC) is a cornerstone of ethical clinical research, yet comprehensive studies consistently reveal significant gaps in participant comprehension [18]. The i-CONSENT project addresses these challenges by improving IC materials to make them more comprehensible, accessible, and tailored to the specific needs of diverse populations [18]. This approach recognizes that effective IC must meet five key criteria: voluntariness, capacity, disclosure, understanding, and decision-making [18]. Co-creation represents a paradigm shift in developing these materials, moving from a top-down, researcher-driven process to a collaborative approach that actively involves target populations as partners in material development [31]. This methodology acknowledges the value of participant voices and experiences, ultimately leading to more effective comprehension outcomes.

Core Principles of Co-Creation in Material Development

Defining Co-Creation in Educational and Research Contexts

Co-creation is a collaborative approach that considers the interests and voices of all stakeholders [32]. In education and research contexts, this means not only contextualizing but also creating partnerships to serve the learners and their communities [32]. When applied to informed consent material development, co-creation involves inviting target populations to participate in constructing knowledge or designing materials, activities, and assessments [31]. This process acknowledges that participants possess valuable insights about their own cognitive needs, literacy levels, and communication preferences that researchers may lack.

Co-creation concepts describe the development of learning material by employees, for employees in organizational settings [33], and this same principle applies to research contexts where materials are developed by participants, for participants. The approach enables the design of situated, adapted learning materials that are easier for target audiences to understand and are available just in time, thereby counteracting cognitive overload [33].

Theoretical Foundations and Benefits

The theoretical foundation of co-creation draws from situated learning theory, which emphasizes that learning is most effective when embedded in the context in which it will be applied [33]. This is particularly relevant for informed consent processes, where participants must understand complex information well enough to make meaningful decisions about their participation. Co-creation offers numerous demonstrated benefits:

- Improved disciplinary learning for participants and learning about teaching for researchers [31]

- Deeper engagement both as learners and as teachers [31]

- More confidence as learners or teachers [31]

- Shift toward shared responsibility for learning and teaching [31]

- Stronger sense of belonging to a learning community [31]

- Enhanced curricular materials and teaching approaches [31]

Additionally, the creation of content has positive effects on the diverse participants involved in the co-creation process, including increasing autonomy, self-regulation, and responsibility; improving performance; and enhancing critical reflection and communication skills [33].

Methodological Framework for Co-Creation

Levels of Participant Involvement

The degree of participant involvement in co-creation can vary significantly across a spectrum from consultation to full partnership. Bovill et al. (2017) present different levels of student involvement in the curriculum that can be adapted for research contexts [31]:

Table: Levels of Participation in Co-Creation

| Participation Level | Description | Application in IC Research |
|---|---|---|
| Dictated Curriculum | Participants have no control or input into the material design | Traditional researcher-developed consent forms |
| Pedagogical Consultation | Researcher incorporates ideas and feedback from a selected group of participants | Focus groups providing feedback on draft materials |
| Partnership Classroom | All participants contribute ideas and feedback throughout the process | Iterative design with entire participant cohorts |
| Curriculum Co-design | Working with participants to redesign materials or co-design new ones | Participant representatives join material development teams |
| Knowledge Co-creation | Engaging participants in research activities that contribute to new knowledge | Participants as co-researchers in developing and testing IC frameworks |

Implementing Co-Creation: Practical Approaches

Successful implementation of co-creation in informed consent material development involves several practical approaches drawn from validated methodologies:

Design Thinking Sessions: The i-CONSENT project conducted design thinking sessions with children and parents, as well as sessions with children alone, to develop appropriate materials for minors [18]. Similarly, they held two design thinking sessions with pregnant women to develop tailored materials for this population [18].

Multidisciplinary Collaboration: A multidisciplinary team comprising clinical trial physicians, epidemiologists, a sociologist, a journalist, and a nurse collaborated on the design of materials, ensuring they were scientifically accurate while addressing the cognitive and cultural needs of participants [18].

Iterative Piloting: The development process includes piloting the contents of information sheets and surveys with target populations to refine materials before final implementation [18].

Layered Information Approaches: Implementing modular information designs that allow participants to access additional details or definitions by clicking on specific terms, accommodating varying levels of information needs [18].
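The layered approach described above can be sketched as a glossary lookup, where the base text stays short and definitions are fetched only when a participant asks for them. The glossary entries, names, and sample sentence below are illustrative assumptions, not content from the i-CONSENT materials.

```python
from typing import Dict, List, Optional

# Hypothetical second-layer content: plain-language definitions shown on demand
GLOSSARY: Dict[str, str] = {
    "placebo": "An inactive substance that looks like the study treatment.",
    "randomization": "Assigning participants to study groups by chance.",
}


def define(term: str, glossary: Dict[str, str] = GLOSSARY) -> Optional[str]:
    """Return the definition for a clicked term, or None if there is none."""
    return glossary.get(term.lower())


def clickable_terms(text: str, glossary: Dict[str, str] = GLOSSARY) -> List[str]:
    """Find which words in the base text should be rendered as clickable."""
    words = {w.strip(".,;:()").lower() for w in text.split()}
    return sorted(words & set(glossary))


base_text = "You may receive a placebo, chosen by randomization."
print(clickable_terms(base_text))
print(define("Placebo"))
```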

The workflow for implementing co-creation in material development follows a systematic process:

Experimental Evidence and Outcomes

Comprehension Assessment Results

The effectiveness of co-created materials has been rigorously evaluated in multiple studies. A cross-sectional study conducted with 1,757 participants across Spain, the United Kingdom, and Romania demonstrated significant success [18]. The study involved 620 minors, 312 pregnant women, and 825 adults who reviewed electronically delivered informed consent (eIC) materials developed through co-creation methodologies [18].

Table: Comprehension Scores by Population Group

| Participant Group | Sample Size | Mean Comprehension Score | Standard Deviation | Adequate Comprehension (80-90%) |
|---|---|---|---|---|
| Minors | 620 | 83.3% | 13.5 | Yes |
| Pregnant Women | 312 | 82.2% | 11.0 | Yes |
| Adults | 825 | 84.8% | 10.8 | Yes |

These results demonstrate that co-created materials consistently achieved adequate comprehension levels (above 80%) across all demographic groups [18]. The study also revealed important demographic variations in comprehension. Women and girls outperformed men and boys (β=+.16 to +.36), and Generation X adults scored higher than millennials (β=+.26, P<.001) [18]. Interestingly, prior trial participation was associated with lower comprehension scores (β=−.47 to −1.77), suggesting that overconfidence from previous experience might negatively impact engagement with new consent materials [18].

Co-creation methodologies also revealed significant differences in format preferences across population groups, highlighting the importance of offering multiple modalities:

Table: Format Preferences by Participant Group

| Participant Group | Video Preference | Text Preference | Other Formats | Satisfaction Rate |
|---|---|---|---|---|
| Minors (n=620) | 61.6% (382) | 22.4% (139) | 16.0% (99) | 97.4% (604) |
| Pregnant Women (n=312) | 48.7% (152) | 34.9% (109) | 16.4% (51) | 97.1% (303) |
| Adults (n=825) | 28.2% (233) | 54.8% (452) | 17.0% (140) | 97.5% (804) |

These findings demonstrate that co-created materials achieved high satisfaction rates (exceeding 97%) across all groups [18]. The variation in format preferences underscores the importance of tailoring delivery methods to specific populations rather than taking a one-size-fits-all approach.
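The percentages in the format preference table can be recomputed from the reported counts, which is a useful consistency check when adapting the table for a new study. The sketch below uses the counts given above; the variable and function names are hypothetical.

```python
# Counts taken from the format preference table above
PREFERENCES = {
    "Minors": {"video": 382, "text": 139, "other": 99},
    "Pregnant Women": {"video": 152, "text": 109, "other": 51},
    "Adults": {"video": 233, "text": 452, "other": 140},
}
GROUP_SIZES = {"Minors": 620, "Pregnant Women": 312, "Adults": 825}
SATISFIED = {"Minors": 604, "Pregnant Women": 303, "Adults": 804}


def shares(counts):
    """Percentage of the group choosing each format, to one decimal place."""
    total = sum(counts.values())
    return {fmt: round(100 * n / total, 1) for fmt, n in counts.items()}


def most_preferred(counts):
    """Format chosen by the largest number of participants."""
    return max(counts, key=counts.get)


for group, counts in PREFERENCES.items():
    # The three format counts should account for every participant in the group
    assert sum(counts.values()) == GROUP_SIZES[group]
    satisfaction = round(100 * SATISFIED[group] / GROUP_SIZES[group], 1)
    print(group, most_preferred(counts), shares(counts), satisfaction)
```

Recomputed shares can differ from published figures by 0.1 percentage points where the original authors rounded so that each row sums to 100%.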

Technical Support: Troubleshooting Common Co-Creation Challenges

Frequently Asked Questions

Q: What are the most significant challenges in implementing co-creation for material development? A: The primary challenges include: (1) Power dynamics - co-creation "requires the teacher to relinquish some inherent power and, similarly, requires students to take responsibility in their empowered status as partners in the classroom" [31]; (2) Time management - the process requires additional time to acclimate participants to the process and expectations [31]; and (3) Cognitive load - participants may experience increased cognitive demands during the creation process [33].

Q: How can researchers address power imbalances in co-creation processes? A: Successful approaches include building trust through transparent communication, establishing clear guidelines for the co-creation process, acknowledging the value of participant expertise, and creating structured opportunities for meaningful input rather than token consultation [31].

Q: What methodological considerations are crucial for cross-cultural implementation of co-created materials? A: The i-CONSENT project demonstrated that while translated materials maintained high efficacy across countries, comprehension scores in Romania were lower among participants with lower educational levels (β=−1.05, P=.001) [18]. This highlights the need for cultural adaptation beyond mere translation, considering local customs, linguistic conventions, and educational contexts.

Q: How can researchers manage the increased cognitive load associated with co-creation? A: Strategies include breaking complex tasks into manageable steps, providing clear templates and guidelines, offering adequate technical support, and distributing development activities across multiple sessions to prevent participant fatigue [33].

Troubleshooting Workflow for Co-Creation Implementation

The following diagram outlines a systematic approach to addressing common challenges in co-creation implementation:

Research Reagent Solutions: Essential Tools for Co-Creation

Successful implementation of co-creation methodologies requires specific tools and approaches that function as "research reagents" in this context. The following table details essential components for effective co-creation in informed consent material development:

Table: Essential Research Reagents for Co-Creation Implementation

| Tool Category | Specific Implementation | Function | Example Applications |
|---|---|---|---|
| Participatory Design Frameworks | Design Thinking Sessions | Structured approach to collaborative problem-solving that emphasizes empathy and iteration | Sessions with minors and parents to develop age-appropriate consent materials [18] |
| Multimodal Content Delivery Systems | Layered Web Content | Modular information architecture allowing users to access details at their preferred depth | Website allowing participants to click terms for definitions [18] |
| Narrative Development Tools | Tailored Video Formats | Storytelling approaches designed for specific demographic groups | Question-and-answer style videos for pregnant women; narrative storytelling for minors [18] |
| Assessment Instruments | Adapted Quality of Informed Consent Questionnaire (QuIC) | Validated tools modified for specific populations to measure comprehension outcomes | Tailored adaptations for minors, pregnant women, and adults with appropriate reading levels [18] |
| Cross-Cultural Adaptation Protocols | Professional Translation Rubrics | Guidelines ensuring fidelity to meaning while adapting to local customs and linguistic conventions | Translation process prioritizing contextual appropriateness for multinational trials [18] |

The co-creation model represents a significant advancement in the development of informed consent materials that genuinely promote participant comprehension. By actively involving target populations in the design process, researchers can create materials that are more accessible, engaging, and effective across diverse demographic groups. The experimental evidence demonstrates that co-created materials consistently achieve adequate comprehension levels (above 80%) and high satisfaction rates (exceeding 97%) across populations including minors, pregnant women, and adults [18]. Future research should continue to explore regional disparities, evaluate interventions for overconfident returning participants, and validate these tools across broader cultural contexts to further optimize informed consent processes in clinical research.

Q1: What are the key format preferences for minors in informed consent materials? Research indicates that minors (ages 12-13) show a strong preference for video content. In a multinational study, 61.6% of minors preferred videos, which were presented in a narrative storytelling format, making video clearly more popular than text-based materials for this demographic [26] [18].

Q2: How do content preferences of pregnant women differ from other adult populations? Pregnant women participating in clinical trials demonstrated divided preferences between videos (48.7%) and other digital formats. They responded particularly well to question-and-answer style videos and infographics explaining study procedures, suggesting a need for both visual engagement and specific informational clarity [26].

Q3: What content formats do adults prefer for complex clinical trial information? Unlike younger demographics, most adults (54.8%) prefer traditional text-based materials, though enhanced with layered web content and supporting infographics. Generation X adults consistently outperformed millennials in comprehension scores when using these text-dominant formats [26] [18].

Q4: How effective are co-creation methods in developing tailored content? Materials developed through participatory design were associated with adequate comprehension across all demographics. Design thinking sessions with minors and pregnant women, plus surveys with adults, produced materials that yielded mean comprehension scores exceeding 80% across all groups, with satisfaction rates over 97% [26].

Q5: Do these preferences translate across different cultural contexts? While core preferences remain consistent, cultural adaptation is crucial. Materials co-created in Spain maintained high efficacy when translated to English and Romanian, though comprehension scores in Romania were lower among participants with lower educational levels, indicating need for localized adjustment [26].

Experimental Protocols & Data

Table 1: Comprehension Scores by Demographic and Format Preference

| Demographic | Sample Size | Mean Comprehension Score (%) | Preferred Format | Percentage Preferring Format |
|---|---|---|---|---|
| Minors (12-13 years) | 620 | 83.3 (SD 13.5) | Narrative Video | 61.6% |
| Pregnant Women | 312 | 82.2 (SD 11.0) | Q&A Video | 48.7% |
| Adults (all) | 825 | 84.8 (SD 10.8) | Layered Text | 54.8% |
| Adults (Generation X subgroup) | Not specified | Higher than millennials (β=+.26) | Layered Text with Infographics | Not specified |

Methodology: i-CONSENT Guideline Implementation

The experimental protocol followed a rigorous cross-sectional design across Spain, the United Kingdom, and Romania [26] [18]:

Participant Recruitment:

- Total 1,757 participants: 620 minors, 312 pregnant women, 825 adults

- Adults categorized as Millennials (18-38) and Generation X (39-53)

- Multi-stage sampling ensuring demographic representation
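The adult cohort definition above can be captured in a short helper, which keeps the age cut-offs in one place when preparing data for subgroup analysis. The age bands are those listed in the recruitment criteria; the function name and the label for out-of-range ages are hypothetical.

```python
def adult_cohort(age: int) -> str:
    """Assign an adult participant to the generational cohort used in the study."""
    if 18 <= age <= 38:
        return "Millennial"
    if 39 <= age <= 53:
        return "Generation X"
    # Assumption: ages outside both bands are flagged rather than forced into a cohort
    return "outside study age bands"


print([adult_cohort(a) for a in (18, 38, 39, 53, 60)])
```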

Material Development Process:

- Co-creation Phase: Design thinking sessions with minors and parents; separate sessions with pregnant women; online surveys with adults

- Multidisciplinary Team: Clinical trial physicians, epidemiologists, sociologists, journalists, and nurses

- Format Development: Layered web content, narrative videos, printable documents, and customized infographics

- Translation Protocol: Professional translation to English and Romanian with independent quality review

Assessment Methodology:

- Tool: Adapted Quality of Informed Consent (QuIC) questionnaire

- Objective Comprehension: 22 questions scored as low (<70%), moderate (70%-80%), adequate (80%-90%), or high (≥90%)

- Subjective Comprehension: 5-point Likert scale

- Satisfaction Metrics: Likert scales and usability questions, with ≥80% considered acceptable

- Statistical Analysis: Multivariable regression models identifying demographic predictors

Troubleshooting Guides

Problem: Low comprehension scores among prior trial participants

- Issue: Participants with previous clinical trial experience showed significantly lower comprehension (β=-.47 to -1.77)

- Solution: Implement tailored engagement strategies for returning participants rather than reused generic materials

- Prevention: Develop specific content modules addressing unique concerns of experienced trial participants [26]

Problem: Cultural adaptation gaps in translated materials

- Issue: Romanian participants with lower educational levels showed reduced comprehension (β=-1.05, P=.001)

- Solution: Implement additional cultural localization beyond linguistic translation, focusing on educational accessibility

- Validation: Conduct targeted pilot testing with specific demographic subgroups before full deployment [26]

Problem: Generational comprehension differences in adult populations

- Issue: Generation X adults outperformed millennials despite similar materials

- Solution: Develop generation-specific content variations within adult materials, particularly for complex procedural information

- Format Adjustment: Maintain text-dominant approaches for Generation X while incorporating more visual elements for millennial subgroups [26]

Research Reagent Solutions

Table 2: Essential Research Materials for Demographic Content Testing

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Adapted QuIC Questionnaire | Measures objective and subjective comprehension | Requires demographic-specific customization; validated translations needed |
| Layered Web Content Platform | Digital delivery with modular information access | Supports progressive disclosure of complex information |
| Narrative Video Production | Engages visually-oriented demographics | Storytelling format for minors; Q&A for pregnant women |
| Co-creation Session Protocols | Facilitates participant-led design | Design thinking methods for minors; surveys for adults |
| Multilingual Translation Rubric | Ensures cross-cultural applicability | Native speaker translation with contextual adaptation review |

Methodology Visualization

Research Methodology Workflow

Format Preference Mapping

Overcoming Common Pitfalls in Consent Comprehension

Core Problem & Evidence Base

The Inconsistency of Verbal Descriptors

Using verbal descriptors like "common" or "rare" without numerical frequencies leads to highly variable interpretations among research participants and patients. This variability can compromise the informed consent process in clinical research.

Table 1: Numerical Interpretations of Common Verbal Descriptors

| Verbal Descriptor | EC Guideline Definition | Lay Interpretation Range (Mean) | Reported Participant Preference for Numerical Data |
|---|---|---|---|
| Very Common | ≥ 10% (≥1/10) | Data Not Available | Most participants prefer numerical information, alone or combined with verbal labels [34]. |
| Common | ≥ 1% to < 10% (≥1/100 to <1/10) | Data Not Available | Most participants prefer numerical information, alone or combined with verbal labels [34]. |
| Uncommon | ≥ 0.1% to < 1% (≥1/1,000 to <1/100) | Data Not Available | Most participants prefer numerical information, alone or combined with verbal labels [34]. |
| Rare | ≥ 0.01% to < 0.1% (≥1/10,000 to <1/1,000) | 7% to 21% [34] | Most participants prefer numerical information, alone or combined with verbal labels [34]. |
| Very Rare | < 0.01% (<1/10,000) | Data Not Available | Most participants prefer numerical information, alone or combined with verbal labels [34]. |
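The EC frequency bands in Table 1 can be encoded as a lookup that returns both the verbal descriptor and a numerical phrase, so the two are never presented apart. This is a minimal sketch: the thresholds are those in the table, while the function names and the exact wording of the plain-language phrases are assumptions for illustration.

```python
# (descriptor, lower bound as a probability, plain-language phrase)
EC_BANDS = [
    ("Very common", 0.10, "may affect more than 1 in 10 people"),
    ("Common", 0.01, "may affect up to 1 in 10 people"),
    ("Uncommon", 0.001, "may affect up to 1 in 100 people"),
    ("Rare", 0.0001, "may affect up to 1 in 1,000 people"),
]


def ec_descriptor(probability: float):
    """Return (descriptor, phrase) for a side-effect probability between 0 and 1."""
    if not 0 < probability <= 1:
        raise ValueError("probability must be greater than 0 and at most 1")
    for label, lower_bound, phrase in EC_BANDS:
        if probability >= lower_bound:
            return label, phrase
    return "Very rare", "may affect up to 1 in 10,000 people"


def risk_label(side_effect: str, probability: float) -> str:
    """Combine the verbal descriptor with its numerical phrase in one line."""
    label, phrase = ec_descriptor(probability)
    return f"{side_effect}: {label} ({phrase})"


print(risk_label("Headache", 0.05))
print(risk_label("Severe allergic reaction", 0.0005))
```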

Table 2: Impact of Presentation Format on Comprehension and Perception

| Outcome Measure | Verbal Descriptors Alone | Numerical Presentation | Key Evidence |
|---|---|---|---|
| Risk Perception Accuracy | Large overestimation of risk (e.g., 3% - 54%) [35]. | Smaller overestimation (e.g., 2% - 20%) [35]. | Numerical data leads to more accurate risk estimates [35]. |
| Information Satisfaction | Lower satisfaction scores [35]. | Higher satisfaction (MD: 0.48 on Likert scale, p<0.00001) [35]. | Numbers increase satisfaction with the information [35]. |
| Likelihood of Medication Use | Reduced intention for common side effects [35]. | Increased likelihood (e.g., MD: 0.90 for common effects, p<0.00001) [35]. | Numerical presentation supports better decision-making [35]. |

Troubleshooting Guides & FAQs

FAQ: Risk Communication in Informed Consent Forms (ICFs)

Q1: Why is it problematic to use only verbal descriptors like "common" or "rare" for side effects in our consent forms? A1: Verbal descriptors alone are interpreted with extreme variability. For example, the term "rare" can be interpreted as a risk ranging from 7% to 21%, whereas regulatory guidelines define it as 0.01%-0.1% [34]. This leads to participants overestimating their risk, which can affect trial participation and adherence [35].

Q2: What is the most effective way to present risk frequencies? A2: The most effective method is to pair a standard verbal descriptor with its corresponding numerical frequency (e.g., "Common (may affect up to 1 in 10 people)"). This approach improves comprehension accuracy, reduces risk overestimation, and increases participant satisfaction compared to words or numbers alone [35] [36].

Q3: Our ICFs are already long. How can we add numbers without overwhelming participants? A3: Use clear, concise formatting. Present side effects in a bulleted list or table, grouping them by frequency bands (e.g., Very Common, Common) and including the numerical equivalent for each band. This enhances scannability and understanding without significantly increasing length [36].

Q4: What is the current state of risk communication in practice? A4: An evaluation of ICFs from ClinicalTrials.gov found widespread issues. Only 3.6% used European Commission-recommended verbal descriptors with their correct numerical probability, over 20% provided no frequency information at all, and none utilized risk visualizations like icon arrays [36].

Q5: Does improving risk communication really impact participant comprehension? A5: Yes. A systematic review found that while no single strategy is a silver bullet, successful consent processes include various communication modes and one-to-one interaction with someone knowledgeable about the study. Clear risk presentation is a foundational element of this multi-faceted approach [37].
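Since the evaluation cited in Q4 found no ICFs using icon arrays, a text-only sketch may help show what one conveys. The function below draws a grid in which each symbol stands for one person; the name, symbols, and default grid size are assumptions, and a real consent form would use proper graphics.

```python
def icon_array(affected: int, total: int = 100, per_row: int = 10) -> str:
    """Render `affected` out of `total` people as rows of symbols.

    '#' marks a person expected to experience the side effect, '.' one who is not.
    """
    if not 0 <= affected <= total:
        raise ValueError("affected must be between 0 and total")
    icons = ["#"] * affected + ["."] * (total - affected)
    rows = [" ".join(icons[i:i + per_row]) for i in range(0, total, per_row)]
    return "\n".join(rows)


# A risk of 1 in 10, shown as 10 affected people out of 100
print(icon_array(10))
```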

Experimental Protocols for Validation

Protocol 1: Assessing Comprehension of Risk Formats

Objective: To compare participant comprehension, risk perception, and satisfaction between different risk presentation formats in an Informed Consent Form (ICF).

Methodology:

- Design: Randomized Controlled Trial (RCT). Participants will be randomized to receive one of several versions of an ICF for a hypothetical clinical trial.

- Intervention Groups:

  - Group A (Verbal Only): Side effects listed using only verbal descriptors (e.g., "Common," "Rare").

  - Group B (Numerical Only): Side effects listed using only absolute frequencies (e.g., "Affects 1 in 10 people," "Affects 1 in 1,000 people").

  - Group C (Combined): Side effects listed with verbal descriptors paired with numerical frequencies (e.g., "Common (affects 1 in 10 people)").

- Participants: Recruit a sample representative of the target population for clinical trials (e.g., by age, education level, and health literacy).

- Outcome Measures:

  - Comprehension: A questionnaire asking participants to estimate the probability of specific side effects.

  - Risk Perception: A 6-point Likert scale measuring perceived likelihood of experiencing side effects.

  - Satisfaction: A 6-point Likert scale measuring satisfaction with the clarity of the risk information.

  - Decision Quality: A measure of the likelihood of participating in the trial.

- Analysis: Compare mean probability estimates, risk perception scores, and satisfaction scores across the three groups using one-way ANOVA, with pairwise t-tests for follow-up comparisons between two formats. Assess whether comprehension accuracy differs by presentation format.
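The analysis step can be illustrated with a small, dependency-free sketch of the one-way ANOVA F statistic. It assumes comprehension is scored as each participant's absolute error (in percentage points) between their probability estimate and the true frequency; that scoring rule, the function name `one_way_anova_f`, and the data are hypothetical. A real analysis would normally use a statistics package (for example `scipy.stats.f_oneway`) to obtain the p-value as well.

```python
from statistics import mean


def one_way_anova_f(groups):
    """Return (F, df_between, df_within) for a list of numeric samples."""
    if len(groups) < 2 or any(len(g) == 0 for g in groups):
        raise ValueError("need at least two non-empty groups")
    all_values = [x for g in groups for x in g]
    df_between = len(groups) - 1
    df_within = len(all_values) - len(groups)
    if df_within <= 0:
        raise ValueError("need more observations than groups")

    grand_mean = mean(all_values)
    # Variation of the group means around the grand mean.
    ss_between = sum(len(g) * (mean(g) - grand_mean) ** 2 for g in groups)
    # Variation of observations around their own group mean.
    ss_within = sum((x - mean(g)) ** 2 for g in groups for x in g)
    if ss_within == 0:
        raise ValueError("no within-group variation; F is undefined")

    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


if __name__ == "__main__":
    # Hypothetical absolute estimation errors (percentage points) per arm.
    errors = {
        "A (verbal only)": [12, 15, 9, 20, 14],
        "B (numerical only)": [4, 6, 3, 7, 5],
        "C (combined)": [2, 3, 1, 4, 2],
    }
    f_stat, df_b, df_w = one_way_anova_f(list(errors.values()))
    print(f"F({df_b}, {df_w}) = {f_stat:.2f}")
```

A large F indicates that the mean error differs between at least two formats; the pairwise follow-up tests then show which formats differ.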

Diagram 1: Risk Format Validation Workflow

Protocol 2: Validating a Standardized Risk Communication Template

Objective: To develop and validate a standardized, accessible template for presenting risk information in ICFs.

Methodology:

- Template Development: Create a template based on best practices:

  - Use of bullet points and clear headings.

  - Side effects grouped in a table with columns for "Descriptor," "Numerical Frequency," and "What this means."

  - Incorporation of icon arrays or bar charts for key risks.

  - Use of high-contrast colors and accessible fonts.

- Usability Testing: Conduct cognitive interviews or focus groups with a diverse set of potential participants. Present the template and ask them to "think aloud" as they interpret the risk information.

- Iterative Refinement: Modify the template based on feedback regarding clarity, usability, and perceived comprehensiveness.

- Comparative Evaluation: Use the finalized template in a randomized trial (as in Protocol 1) against a standard, text-heavy ICF.
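For teams prototyping the icon arrays mentioned in the template, the following is a minimal text-only sketch. The function name `icon_array`, the 10-by-10 layout, and the symbols are illustrative assumptions; a production template would render graphics and should be checked with the same usability testing described above. Using two distinct shapes rather than two colors keeps the array readable without relying on color.

```python
def icon_array(affected: int, total: int = 100, per_row: int = 10,
               filled: str = "X", empty: str = "o") -> str:
    """Render `affected` out of `total` people as rows of text icons."""
    if total <= 0 or per_row <= 0 or not 0 <= affected <= total:
        raise ValueError("need total > 0, per_row > 0, 0 <= affected <= total")
    # Affected icons come first so they form one contiguous block.
    icons = filled * affected + empty * (total - affected)
    rows = [icons[i:i + per_row] for i in range(0, total, per_row)]
    return "\n".join(rows)


if __name__ == "__main__":
    # Hypothetical "Common" side effect: 10 in 100 people affected.
    print("Nausea: 10 out of 100 people (X = affected, o = not affected)")
    print(icon_array(10, 100))
```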

Diagram 2: Template Validation Process

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Risk Communication Research

| Tool / Resource | Function / Purpose | Example / Application |
|---|---|---|
| Systematic Review Databases | To gather and synthesize existing evidence on risk communication formats and their efficacy. | MEDLINE, Embase, PsycINFO, Cochrane Library [35] [34]. |
| Regulatory Guidelines | Provide standardized definitions for verbal risk descriptors and recommendations for patient information. | European Commission (EC) Guideline on readability, EMA and MHRA guidance documents [35] [36]. |
| Clinical Trial Repositories | Source real-world informed consent forms for evaluation and analysis of current practices. | ClinicalTrials.gov [36]. |
| Accessibility Checkers | Ensure that designed templates (colors, contrasts) are accessible to individuals with visual impairments. | WebAIM Contrast Checker, Venngage Accessible Color Palette Generator [12] [38]. |
| Statistical Analysis Software | To analyze comprehension data, compare intervention groups, and calculate effect sizes. | R, RevMan (for meta-analysis), standard statistical packages (SPSS, SAS) [35]. |
| Survey & Data Collection Platforms | To administer comprehension questionnaires and collect outcome measures from study participants. | Qualtrics, REDCap, Covidence (for systematic review management) [34]. |

Frequently Asked Questions (FAQs) for Research Teams

Q1: What are the core ethical principles that should guide the design of informed consent forms?

A1: The design of informed consent forms should be guided by three core ethical principles derived from the Belmont Report: respect for persons (protecting participant autonomy and ensuring voluntary participation), beneficence (minimizing potential harm and maximizing benefits), and justice (ensuring fair distribution of the burdens and benefits of research) [39] [40]. The consent process must be more than a signature; it should ensure genuine comprehension and voluntary decision-making [39].

Q2: How can we effectively assess if a participant has truly understood the consent information?

A2: True comprehension is a cornerstone of ethical consent. Best practices to support and assess understanding include:

- Plain Language: Write consent documents at an 8th-grade reading level to accommodate diverse participant populations [41] [39].

- Mixed Presentation Methods: Use a combination of written, oral, and multimedia formats to cater to different learning styles [39].

- Teach-Back Method: Encourage participants to explain the study's key aspects in their own words. This is part of an ongoing consent process, especially for long-term studies, where understanding should be regularly checked and reaffirmed [39].

Q3: What specific strategies can reduce stress and cognitive load for participants during the consent process?

A3: To create a low-stress consent experience, research teams should:

- Use a Clear, Simple Layout: Implement clean layouts with plenty of white space and a logical flow to prevent participants from feeling overwhelmed [42].

- Incorporate Visual Aids: Use diagrams, charts, or icons to illustrate complex procedures, timelines, or key concepts, making the information easier to digest [39].

- Allow Ample Time: Never rush participants. Encourage them to take the documents home and discuss them with family or trusted advisors [39].

- Apply Color Psychology Thoughtfully: Use a calming color palette. Cool tones like blue and green are associated with calmness, trust, and healing, which can help create a reassuring environment [43] [42]. Avoid harsh reds, which can trigger stress or alarm [43].

Q4: What are the common pitfalls in consent form design that can negatively impact health literacy?

A4: Common pitfalls include:

- Use of Technical Jargon: Failing to translate complex medical or technical terms into plain language [41] [39].

- Poor Visual Design: Dense text blocks, low color contrast, and a disorganized structure significantly hinder readability [44].

- Information Overload: Presenting too much information at once without clear prioritization [42].

- Ignoring Digital Accessibility: For digital consent forms, not ensuring compatibility with screen readers or failing to meet WCAG (Web Content Accessibility Guidelines) for color contrast can exclude participants with visual impairments [44].

Q5: How can we ensure our digital consent forms are accessible to individuals with visual impairments or color vision deficiencies?

A5: Ensuring digital accessibility is an ethical requirement and, in many jurisdictions, a legal one. Key actions include:

- Meeting WCAG Contrast Ratios: Adhere to the WCAG Level AA minimum contrast ratios of 4.5:1 for normal text and 3:1 for large text or user interface components against their background [44].

- Not Relying on Color Alone: Use patterns, text labels, or icons in addition to color to convey information (e.g., for charts or required form fields). This is crucial for the roughly 4.5% of the global population with color vision deficiency [42].

- Using Automated Testing Tools: Leverage tools like WAVE, WebAIM's Contrast Checker, or browser developer tools to identify and fix contrast and other accessibility issues [44].
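The contrast thresholds above can also be checked programmatically. The sketch below implements the relative luminance and contrast ratio formulas from WCAG 2.x for six-digit hex colors; the function names and the example colors are illustrative, and dedicated tools such as those listed above remain the better choice for auditing a full form.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#RRGGBB' or 'RRGGBB'."""
    value = hex_color.lstrip("#")
    if len(value) != 6:
        raise ValueError("expected a six-digit hex color such as '#1A2B3C'")
    r, g, b = (int(value[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(foreground: str, background: str) -> float:
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(foreground: str, background: str, large_text: bool = False) -> bool:
    """Check the 4.5:1 (normal text) or 3:1 (large text / UI) threshold."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(foreground, background) >= threshold


if __name__ == "__main__":
    # Hypothetical palette for a digital consent form on a white background.
    for name, color in [("body text", "#333333"), ("hint text", "#999999")]:
        ratio = contrast_ratio(color, "#FFFFFF")
        print(f"{name}: {ratio:.2f}:1, passes AA: {passes_aa(color, '#FFFFFF')}")
```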

Troubleshooting Guides for Common Consent Comprehension Challenges

Guide 1: Low Participant Comprehension Scores

- Issue or Problem Statement: Post-consent assessments reveal that participants have a poor understanding of the study's purpose, procedures, risks, or rights.

- Symptoms or Error Indicators:

  - Participants cannot correctly answer basic questions about the study.

  - High rates of participant withdrawal shortly after consent.

  - Participants express surprise or confusion about study procedures they agreed to.

- Possible Causes:

  - Consent form is written at a reading level that is too high.

  - The document is overly long and complex.

  - Key information is not effectively highlighted.

  - The consent process is rushed.

- Step-by-Step Resolution Process:

  - Diagnose the Problem: Use a readability formula (e.g., the Flesch-Kincaid Grade Level) to check the form's grade level. Aim for 8th grade or lower [39].

  - Simplify Language: Rewrite complex sentences and replace jargon with plain language. For example, use "heart attack" instead of "myocardial infarction" [41].

  - Restructure for Clarity: Use the "Key Information" requirement of the 2018 Common Rule as a guide. Begin the form with a concise summary of the most important elements [41].

  - Implement a Teach-Back Protocol: Train research staff to ask participants to explain the study in their own words, which helps identify and clarify points of confusion [39].

  - Validate the Revisions: Test the revised form and process with a small, representative group before full implementation.

- Escalation Path or Next Steps: If comprehension scores remain low despite revisions, consult with a health literacy expert or bioethicist for a specialized review.

- Validation or Confirmation Step: Post-implementation, re-administer the comprehension assessment. Successful resolution is indicated by a significant improvement in average scores.
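The readability check in the diagnosis step can be approximated with a short script. The sketch below computes the Flesch-Kincaid Grade Level, 0.39 × (words per sentence) + 11.8 × (syllables per word) − 15.59, using a rough vowel-group heuristic for syllables. That heuristic, the function names, and the sample sentences are assumptions for illustration; established readability tools count syllables more accurately, so treat the result as a screening estimate only.

```python
import re


def count_syllables(word: str) -> int:
    """Rough syllable estimate: count vowel groups, drop a silent final 'e'."""
    lowered = word.lower()
    count = len(re.findall(r"[aeiouy]+", lowered))
    if lowered.endswith("e") and not lowered.endswith("le") and count > 1:
        count -= 1
    return max(count, 1)


def flesch_kincaid_grade(text: str) -> float:
    """Flesch-Kincaid Grade Level of `text` (approximate)."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    if not sentences or not words:
        raise ValueError("text must contain at least one sentence and one word")
    syllables = sum(count_syllables(w) for w in words)
    return (0.39 * len(words) / len(sentences)
            + 11.8 * syllables / len(words)
            - 15.59)


if __name__ == "__main__":
    # Hypothetical consent-form sentences, before and after simplification.
    jargon = ("Participants may experience myocardial infarction subsequent "
              "to administration of the investigational product.")
    plain = "You may have a heart attack. This is rare."
    for label, text in [("original", jargon), ("revised", plain)]:
        grade = flesch_kincaid_grade(text)
        flag = "OK" if grade <= 8 else "too high"
        print(f"{label}: grade {grade:.1f} ({flag})")
```

Running the same check before and after revision gives a quick signal of whether the simplification step moved the form toward the 8th-grade target, ahead of testing with a representative group.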

Guide 2: High Participant Anxiety and Drop-Out During Consent

- Issue or Problem Statement: Participants exhibit signs of stress or anxiety during the consent discussion, leading to hesitation or refusal to enroll.

- Symptoms or Error Indicators:

  - Verbal or non-verbal expressions of worry or being overwhelmed.

  - Participants focus excessively on potential risks.

  - A higher-than-expected rate of decline to participate.

- Possible Causes:

  - The language used is alarming or overly focused on risks.

  - The visual design of the material (e.g., color, layout) is subconsciously stressful.

  - The environment or researcher's demeanor is not supportive.

- Step-by-Step Resolution Process:

  - Audit Content Tone: Review the consent form to ensure risks are presented in a balanced, factual manner without sensationalism.

  - Apply Color Psychology: Incorporate a calming color palette. Blues and greens can promote feelings of trust and calm, while harsh reds should be avoided in non-urgent contexts [43] [42].

  - Optimize Visual Design: Use a clean, uncluttered layout with ample white space. Break text into manageable sections with clear, descriptive headings [42].

  - Train Research Staff: Ensure staff are trained in clear communication and empathetic interaction. They should encourage questions and reassure participants that their comfort is a priority [39].

  - Provide a "Cooling-Off" Period: Explicitly encourage participants to take the document home and confirm their decision later, reducing pressure [39].

- Validation or Confirmation Step: Monitor participant feedback and drop-out rates. A successful intervention will lead to qualitative feedback about a more comfortable experience and a reduction in anxiety-related declines.

Quantitative Data on Digital Health Consent

The following tables summarize key quantitative findings from recent research into the completeness of informed consent forms (ICFs) for digital health studies.

Table 1: Completeness of Consent Forms in Digital Health Research

Summary of a review of 25 real-world Informed Consent Forms (ICFs) for adherence to ethical elements, highlighting significant gaps in participant protection [40].

| Metric | Value | Context / Implication |
|---|---|---|
| Highest Completeness Score | 73.5% | Even the best-performing ICF was missing over a quarter of the required/recommended ethical elements [40]. |
| Full Adherence to Framework | 0% | None of the 25 reviewed ICFs fully adhered to all required ethical elements, revealing systemic gaps [40]. |
| Major Gap Area | Technology-specific risks | Consent forms were particularly poor at conveying risks related to data privacy, reuse, and third-party technology involvement [40]. |

Table 2: Key Attributes of a Comprehensive Digital Health Consent Framework

Essential domains and attributes identified for a robust ethical framework, extending beyond traditional consent to address digital-specific challenges [40].

| Framework Domain | Description | Example Attributes |
|---|---|---|
| Consent | Fundamental aspects of research participation. | Study purpose, benefits, compensation, voluntary participation, right to withdraw [40]. |
| Grantee (Researcher) Permissions | What researchers are allowed to do with participant data and biospecimens. | Types of analyses (e.g., genomic), future use permissions, data sharing with collaborators [40]. |
| Grantee (Researcher) Obligations | Responsibilities researchers must fulfill to protect participants. | Data storage and security, information confidentiality, result sharing, managing Incidental Findings [40]. |