Beyond the Paper Form: How Multimedia and Digital Tools are Revolutionizing Informed Consent in Clinical Research

This article explores the transformative potential of multimedia and digital tools in enhancing the informed consent process for clinical research and healthcare.

Abstract

This article explores the transformative potential of multimedia and digital tools in enhancing the informed consent process for clinical research and healthcare. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning from the foundational challenges of traditional consent to the practical application of digital solutions like interactive apps, short-form videos, and AI-based tools. It synthesizes recent evidence on their efficacy in improving participant comprehension, satisfaction, and engagement, while also addressing key implementation challenges such as health literacy, accessibility, and data security. The article further offers strategic recommendations for optimizing consent forms and processes, supported by comparative data on outcomes, to guide the successful and ethical integration of these technologies into modern research protocols.

The Why: Understanding the Critical Need for Modernizing Informed Consent

Identifying the Shortfalls of Traditional Paper-Based Consent

Core Deficiencies of the Paper-Based Consent Pathway

Traditional paper-based informed consent is prone to several critical failures that can compromise patient understanding, create operational inefficiencies, and increase institutional liability.

- Comprehension and Legibility Issues: Paper forms are often written in complex language that patients may not read thoroughly, so the resulting consent may not be genuinely informed. Handwritten forms can also be illegible or incomplete. Studies have found missing patient information in 10% of forms and missing procedure details in 30% [1] [2].

- Operational Inefficiencies and Costs: The paper process consumes administrative and clinical staff time through manual data collection and re-entry. This duplicates effort, adds substantial paper and handling costs (estimated at $4-7 per form), and frequently results in lost or misfiled documents [1]. One study found that signed consent forms were missing at the time of surgery for two-thirds of patients, and procedures were delayed in 14% of cases [1].

- Inadequate Standardization and Personalization: Paper forms lack standardization, which can lead to errors of omission. They are also inflexible, making it difficult to tailor information to individual patient factors such as age, education level, cultural background, or language [2] [3].

- Process Breakdowns and Liability: The physical nature of paper forms makes them susceptible to being damaged, lost, or misfiled. Inadequate documentation of the consent discussion can lead to greater liability exposure and potential litigation [1] [4].

Quantitative Analysis: Paper vs. Digital Consent

The following tables summarize key quantitative findings from research comparing paper-based and digital consent pathways.

Table 1: Operational and Cost Impact of Paper-Based Consent

| Shortfall Metric | Quantitative Finding | Source / Context |
|---|---|---|
| Missing Consent Forms | 66% of patients at surgery | Study cited by IngeniousMed [1] |
| Procedure Delays | 14% of total surgical cases | Study cited by IngeniousMed [1] |
| Handling Cost per Form | $4 - $7 (approx. £4-7) | IngeniousMed & UK NHS micro-costing study [1] [4] |
| Printing Cost per Form | ~$1 (approx. £0.90) | IngeniousMed & UK NHS micro-costing study [1] [4] |
| Forms with Missing Details | 30% missing procedure details | Study on hand-written surgical consent forms [1] |

Table 2: Comparative Workflow Analysis

| Process Step | Paper-Based Pathway | Digital Pathway |
|---|---|---|
| Form Creation & Access | Physical printing, risk of using outdated versions [1] | Dynamic, version-controlled templates accessible on any device [1] |
| Form Completion | Hand-written, prone to illegibility and omission [4] | Automated data population; standardized, legible data fields [1] |
| Storage & Transportation | Physical transportation to and from storage is required [4] | Instant, secure digital storage; no physical transport needed [4] |
| Patient Review | Requires physical presence; limited access to supplementary resources [1] | Can be completed remotely; can link to additional learning resources [1] [4] |
| Audit & Compliance | Manual, time-consuming retrieval and checking [1] | Integrated analytics and easy auditing capabilities [1] |

Experimental Protocol: Micro-Costing a Consent Pathway

Aim: To quantitatively compare the resource utilization and costs associated with paper-based versus digital consent pathways in a clinical setting.

Methodology (based on UK NHS micro-costing study [4]):

- Pathway Mapping: Model the process steps for both paper and digital consent using a decision-tree structure.

  - Paper Pathway: Printing -> Consent (pre-surgery) -> Storage -> Consent (day of surgery) -> Pre-op Check.

  - Digital Pathway: Consent (pre-surgery) -> Consent (day of surgery) -> Pre-op Check.

- Cost Identification: Identify all costs associated with each process step. For paper, this includes the costs of printing, physical storage, and transportation of forms. For digital, this includes platform licensing fees.

- Time Measurement: Measure the time spent by clinical and administrative staff on each step of the consent process. Consultation duration is a key cost driver.

- Data Collection: Collect data from a representative surgical department (e.g., 110 consent procedures per month) over a defined period.

- Analysis: Calculate the total cost per consent episode for each pathway. Conduct sensitivity analyses to identify the most influential cost drivers (e.g., consultation time, form loss rate).
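A minimal Python sketch of the analysis step follows. It assumes each pathway is a linear sequence of steps whose cost is staff time multiplied by an hourly rate plus materials, with a single decision-tree branch for a lost or unusable paper form. Every unit cost, time, and rate below is a hypothetical placeholder, not a figure from the NHS study [4]. The loop at the end is a one-way sensitivity analysis on the form-loss rate.

```python
from dataclasses import dataclass


@dataclass
class Step:
    name: str
    staff_minutes: float      # clinical/administrative time for this step
    materials: float = 0.0    # printing, storage, transport, or licence share


def episode_cost(steps, hourly_rate, loss_rate=0.0, loss_cost=0.0):
    """Expected cost of one consent episode.

    Base cost = staff time x hourly rate + materials, summed over steps.
    The decision-tree branch for a lost form adds loss_rate x loss_cost
    (e.g. re-consenting or rescheduling the procedure).
    """
    base = sum(s.staff_minutes / 60 * hourly_rate + s.materials for s in steps)
    return base + loss_rate * loss_cost


# Hypothetical inputs, for illustration only.
RATE = 60.0          # staff cost per hour
LOSS_COST = 200.0    # cost of one lost-form event
paper = [
    Step("printing", 2, materials=1.0),
    Step("consent (pre-surgery)", 20),
    Step("storage and transport", 5, materials=0.5),
    Step("consent (day of surgery)", 10),
    Step("pre-op check", 5),
]
digital = [
    Step("consent (pre-surgery)", 20, materials=2.0),  # per-episode licence share
    Step("consent (day of surgery)", 8),
    Step("pre-op check", 3),
]

# One-way sensitivity analysis: vary only the paper form-loss rate.
for loss_rate in (0.0, 0.05, 0.10):
    diff = episode_cost(paper, RATE, loss_rate, LOSS_COST) - episode_cost(digital, RATE)
    print(f"loss rate {loss_rate:.2f}: paper costs {diff:.2f} more per episode")
```

Splitting the single loss branch into separate outcomes, such as a form found late versus a missing form that delays surgery, would bring the model closer to a full decision tree.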

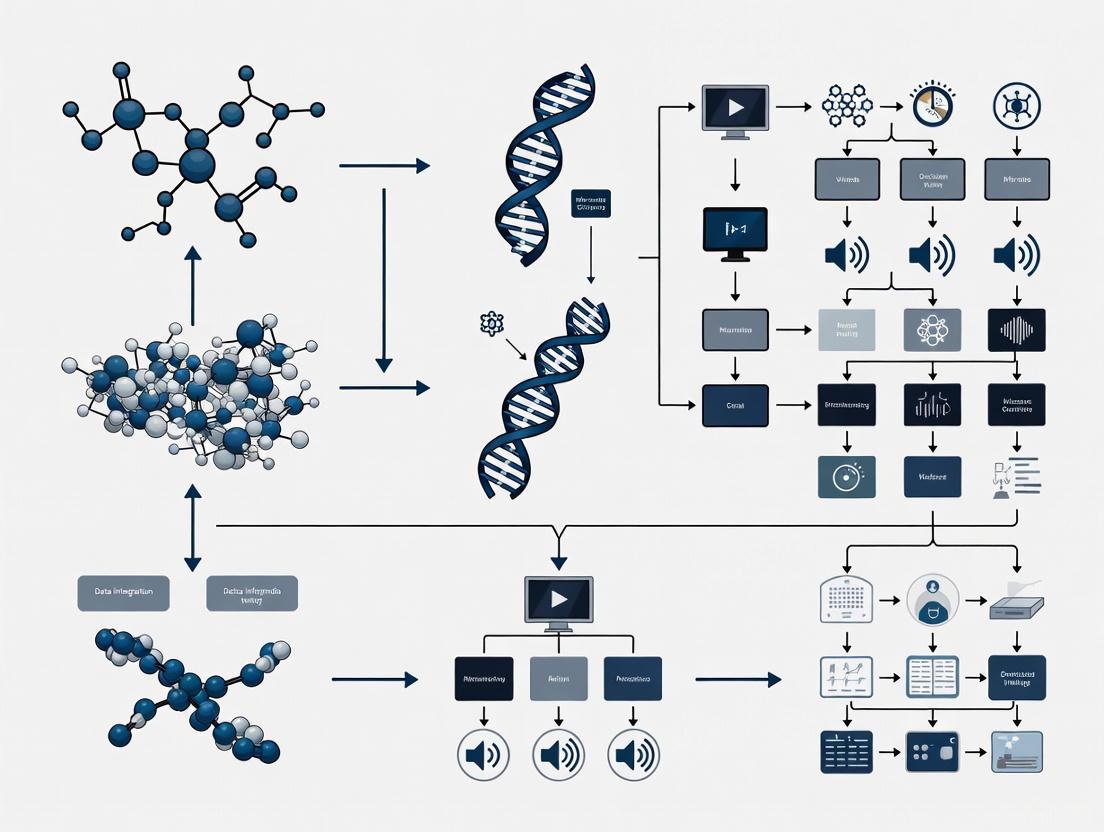

Digital Consent Workflow

The following diagram illustrates the streamlined workflow of a digital consent platform compared to the traditional paper-based process, highlighting the reduction in redundant steps.

Technical Support & Troubleshooting Guide

FAQ 1: What is the most significant operational shortfall of paper consent? Answer: The high rate of missing or incomplete forms is a critical failure. Evidence shows that for two-thirds of patients, the signed paper consent form is missing at the time of surgery, directly leading to delayed procedures in 14% of cases [1]. This disrupts surgical schedules, wastes valuable resources, and causes patient stress.

FAQ 2: How does paper consent fail to ensure genuine patient understanding? Answer: It fails in two key ways:

- Comprehension Barrier: Forms often use complex clinical jargon and are not designed with health literacy principles in mind, making them difficult for patients to understand [2] [3].

- Lack of Personalization: Paper is a static, one-size-fits-all medium. It cannot easily adapt to provide information in different languages, accommodate varying health literacy levels, or highlight risks most relevant to a patient's specific co-morbid conditions [2].

FAQ 3: What are the hidden costs of a paper-based system? Answer: Beyond obvious printing costs (~$1 per form), the largest expenses are associated with staff time for handling ($4-7 per form) and managing the consequences of failure, such as rescheduling surgeries due to lost forms. A UK NHS study found the total cost per consent episode was approximately £0.90 more for paper than for digital [1] [4].

FAQ 4: How does paper consent increase legal and compliance risks? Answer: Illegible handwriting, missing information, and the inability to prove that a proper discussion took place increase liability exposure [1]. In contrast, digital platforms can provide an audit trail and consistently capture what was explained to the patient, offering stronger legal protection. One analysis suggested preventing a single litigation claim could save a health system over £200,000 [4].

The Scientist's Toolkit: Research Reagent Solutions

| Item / Solution | Function in Consent Research |
|---|---|
| eConsent Platform | A software solution that digitizes the entire consent lifecycle, from dynamic form generation and remote signing to seamless integration with Electronic Medical Records (EMRs) for secure storage and auditing [1]. |
| Health Literacy Assessment Tools | Validated instruments (e.g., readability scores, patient comprehension questionnaires) used to evaluate and improve the clarity and accessibility of consent form content for diverse populations [3]. |
| Micro-Costing Framework | A research methodology to meticulously identify, measure, and value all resources (e.g., staff time, materials) used in the paper and digital consent pathways for a robust economic comparison [4]. |
| Web Accessibility Evaluation Tools | Software (e.g., axe DevTools, WAVE) that automatically checks digital consent forms against standards like WCAG to ensure sufficient color contrast and usability for people with visual impairments [5] [6]. |
| Usability Testing Protocol | A structured method involving participants from the target study population to test draft consent forms and processes, identifying points of confusion and ensuring materials are user-friendly before full deployment [3]. |
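Automated checkers such as those listed above test color contrast with the WCAG 2.x contrast-ratio formula. The Python sketch below reproduces that calculation so that a consent app's palette can be spot-checked during usability testing. The example colors are arbitrary. The 4.5:1 threshold is the WCAG 2.x Level AA minimum for normal-size text; large text needs only 3:1.

```python
def _linearize(channel: int) -> float:
    """8-bit sRGB channel -> linear value, per the WCAG 2.x definition."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1 to 21."""
    lighter, darker = sorted(
        (relative_luminance(color_a), relative_luminance(color_b)), reverse=True
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa_normal_text(fg: str, bg: str) -> bool:
    """WCAG 2.x Level AA check for normal-size text."""
    return contrast_ratio(fg, bg) >= 4.5


for fg in ("#000000", "#767676", "#777777"):
    ratio = contrast_ratio(fg, "#FFFFFF")
    print(fg, "on white:", round(ratio, 2), passes_aa_normal_text(fg, "#FFFFFF"))
```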

Frequently Asked Questions & Troubleshooting Guides

This technical support center addresses common challenges researchers face when developing and evaluating multimedia tools for enhancing informed consent. The guidance is framed within the context of a broader thesis on leveraging technology to bridge health literacy gaps in clinical research.

How can I quickly assess and improve the readability of my consent forms?

The Challenge: Consent forms are consistently written at reading levels above what the average patient can comfortably understand. A 2024 study found that surgical consent forms from 15 academic medical centers had a median Flesch-Kincaid Grade Level of 13.9 (college freshman level), while the average American reads at an 8th-grade level [7].

The Solution: Implement a structured AI-human expert collaborative approach to simplify language while preserving clinical and legal accuracy [7].

Experimental Protocol:

- Step 1: Baseline Assessment. Use readability metrics (e.g., Flesch-Kincaid Grade Level, Flesch Reading Ease) to quantify the problem.

- Step 2: AI Facilitation. Process the text through a Large Language Model (LLM) like GPT-4 with prompts focused on simplifying language, reducing sentence length, and minimizing passive voice.

- Step 3: Expert Validation. Have both medical content experts and a legal professional (e.g., a malpractice defense attorney) independently review the simplified form to ensure clinical and legal sufficiency is maintained [7].

- Step 4: Final Evaluation. Re-calculate readability metrics to confirm improvement. The aforementioned study achieved a significant reduction to an 8.9 grade level (P=0.004) [7].
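The baseline and final-evaluation steps rely on two standard formulas. The Flesch-Kincaid Grade Level is 0.39 × (words/sentences) + 11.8 × (syllables/words) - 15.59. The Flesch Reading Ease score is 206.835 - 1.015 × (words/sentences) - 84.6 × (syllables/words). The Python sketch below implements both using a crude vowel-group syllable counter, which is an assumption of this example. Published tools count syllables differently, so scores will not match online calculators exactly, but before/after comparisons made with the same tool remain meaningful. The sample sentences are invented.

```python
import re


def count_syllables(word: str) -> int:
    """Heuristic syllable count: vowel groups, minus a silent final 'e'."""
    w = word.lower()
    n = len(re.findall(r"[aeiouy]+", w))
    if n > 1 and w.endswith("e") and not w.endswith(("le", "ee")):
        n -= 1  # e.g. "adverse" -> 2, while "simple" stays 2
    return max(n, 1)


def readability(text: str) -> dict:
    """Return Flesch-Kincaid Grade Level and Flesch Reading Ease for `text`."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    wps = len(words) / len(sentences)  # average words per sentence
    spw = syllables / len(words)       # average syllables per word
    return {
        "fk_grade": 0.39 * wps + 11.8 * spw - 15.59,
        "reading_ease": 206.835 - 1.015 * wps - 84.6 * spw,
    }


original = ("Participation in this investigational protocol necessitates "
            "comprehensive acknowledgement of potential adverse consequences.")
simplified = "Joining this study has some risks. We will explain each one to you."
for label, text in (("original", original), ("simplified", simplified)):
    scores = readability(text)
    print(f"{label}: grade {scores['fk_grade']:.1f}, ease {scores['reading_ease']:.1f}")
```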

Quantitative Results of AI-Human Collaborative Simplification:

| Readability Metric | Before Simplification | After Simplification | P-value |
|---|---|---|---|
| Flesch-Kincaid Grade Level | 13.9 (College Freshman) | 8.9 (8th Grade) | P = 0.004 |
| Average Reading Time | 3.26 minutes | 2.42 minutes | P < 0.001 |
| Percentage of Passive Sentences | 38.4% | 20.0% | P = 0.024 |
| Word Rarity (Frequency Score) | 2845 | 1328 | P < 0.001 |

Beyond readability, what other factors influence participant preference for consent materials?

The Challenge: Readability is crucial but not the sole determinant of an effective consent process. Prospective participants have varying preferences based on content, demographics, and information needs [8].

The Solution: Adopt a human-centered design approach that goes beyond readability scores to evaluate how consent materials elicit informed questions and cater to subgroup preferences [8].

Experimental Protocol:

- Step 1: Snippet Creation. Break down the consent form into logical, paragraph-length sections ("snippets"). For each, create two versions: the original IRB-approved text and a modified version optimized for readability.

- Step 2: Participant Survey. Recruit eligible participants and present them with paired snippets (original vs. modified), asking for their preference.

- Step 3: Quantitative & Qualitative Analysis. Analyze preferences quantitatively and collect open-ended feedback. Key findings from a 2025 study include [8]:

  - Participants significantly preferred shorter text snippets, especially for sections explaining study risks.

  - Older participants were 1.95 times more likely to prefer the original, more detailed text (P=0.004).

  - The modified snippets often elicited new, informed questions not addressed in the original material.

Key Insight: The effectiveness of consent communication can be measured by its likelihood to elicit "informed questions" from potential participants, a metric that goes beyond simple comprehension checks [8].
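One simple way to approach the preference analysis in Step 3 is an exact sign (binomial) test on how many participants chose the modified snippet, plus an odds ratio for subgroup differences such as age. This Python sketch treats each preference as independent. That is an assumption: the cited study's statistical model is not described here, and repeated choices by the same participant would call for a clustered or mixed model. The counts are hypothetical.

```python
from math import comb


def two_sided_sign_test(k: int, n: int) -> float:
    """Exact two-sided binomial (sign) test of H0: p = 0.5.

    k = participants preferring the modified snippet, n = participants with a
    preference. Because the null distribution is symmetric, the two-sided
    p-value is twice the upper-tail probability, capped at 1.
    """
    tail = max(k, n - k)
    upper = sum(comb(n, i) for i in range(tail, n + 1)) / 2 ** n
    return min(1.0, 2 * upper)


def odds_ratio(a: int, b: int, c: int, d: int) -> float:
    """Odds ratio from a 2x2 table.

    a, b: older participants preferring original / modified text
    c, d: younger participants preferring original / modified text
    """
    return (a / b) / (c / d)


# Hypothetical counts for illustration only.
print(two_sided_sign_test(k=34, n=50))
print(odds_ratio(30, 20, 20, 30))
```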

How do I address the unique ethical and transparency risks of digital health technologies in consent?

The Challenge: Traditional consent forms fail to adequately address risks specific to research using digital health technologies (DHTs), such as data privacy, third-party sharing, and commercial reuse. A 2025 review of 25 real-world digital health consent forms found that none fully adhered to the required and recommended ethical elements; the highest completeness for required attributes was only 73.5% [9].

The Solution: Implement a comprehensive ethical consent framework tailored to DHT research, expanding on guidance from bodies such as the NIH Office of Science Policy [9].

Experimental Protocol for Framework Adherence:

- Step 1: Framework Development. Create a structured framework of consent attributes. The referenced study developed a framework with 63 attributes and 93 sub-attributes across four domains: Consent, Grantee (Researcher) Permissions, Grantee Obligations, and Technology [9].

- Step 2: Gap Analysis. Systematically review your consent form against the framework to identify missing elements. Pay special attention to technology-specific clauses.

- Step 3: Incorporate Critical Elements. Ensure the form includes often-missing but ethically salient elements, such as [9]:

  - Commercial Profit Sharing: Will participants share in profits from commercialized outcomes?

  - Study Information Disclosure: A clear plan for disclosing study information updates.

  - During-study Result Sharing: How and when interim results will be shared with participants.

  - Data Removal Requests: The process for requesting data deletion.
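The gap analysis in Step 2 amounts to checking a form against a checklist and reporting completeness separately for required and recommended attributes. This is the same kind of percentage the review above reports. The Python sketch below uses a small, hypothetical excerpt of attributes. The attribute names come from this section, but the domain assignments and required/recommended flags are illustrative guesses, not taken from the published framework [9].

```python
from collections import defaultdict

# Hypothetical excerpt: attribute -> (domain, required?). Domain assignments and
# required/recommended flags are illustrative, not taken from the framework [9].
FRAMEWORK = {
    "voluntary participation": ("Consent", True),
    "right to withdraw": ("Consent", True),
    "third-party data sharing": ("Grantee Permissions", True),
    "commercial reuse of data": ("Grantee Permissions", False),
    "data removal requests": ("Grantee Obligations", False),
    "during-study result sharing": ("Grantee Obligations", False),
    "study information disclosure": ("Grantee Obligations", False),
    "data storage and security": ("Technology", True),
    "commercial profit sharing": ("Technology", False),
}


def gap_analysis(found: set) -> dict:
    """Completeness (%) for required and recommended attributes, plus gaps by domain."""
    total = {True: 0, False: 0}
    present = {True: 0, False: 0}
    missing = defaultdict(list)
    for attr, (domain, required) in FRAMEWORK.items():
        total[required] += 1
        if attr in found:
            present[required] += 1
        else:
            missing[domain].append(attr)
    return {
        "required_pct": 100 * present[True] / total[True],
        "recommended_pct": 100 * present[False] / total[False],
        "missing": dict(missing),
    }


# Attributes found when reviewing a (hypothetical) consent form.
report = gap_analysis({"voluntary participation", "right to withdraw",
                       "data storage and security", "commercial reuse of data"})
print(report["required_pct"], report["recommended_pct"])
for domain, attrs in report["missing"].items():
    print(f"{domain}: {', '.join(attrs)}")
```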

What is the role of multimedia and interactivity in improving comprehension?

The Challenge: Text-heavy, static consent forms can overwhelm participants and fail to facilitate true understanding.

The Solution: Leverage enhanced eConsent tools that incorporate interactive elements, which have been shown to improve understanding and confidence in study decisions [10].

Experimental Protocol:

- Step 1: Tool Selection. Utilize eConsent platforms that support embedded videos, interactive e-calendars for visit schedules, and real-time comprehension checks.

- Step 2: Comparative Testing. Conduct pilot studies comparing participant comprehension and satisfaction between text-only eConsent and multimedia-enhanced eConsent approaches.

- Step 3: Measure Outcomes. Key results from a pilot study showed that multimedia-enhanced eConsent significantly improved participant understanding and engagement. These tools help transform the consent process from a one-time transaction into an ongoing, technology-assisted conversation [10].
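For the comparative pilot in Step 2, comprehension scores from the two arms can be compared with Welch's t-test, which does not assume equal variances. That is a reasonable default for small pilots. The Python sketch below computes the statistic and the Welch-Satterthwaite degrees of freedom using only the standard library. The scores are invented. Obtaining a p-value requires the t distribution, for example via `scipy.stats.ttest_ind(a, b, equal_var=False)`.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va = variance(a) / len(a)  # squared standard error of mean(a)
    vb = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Invented comprehension scores (out of 10) from a small pilot.
multimedia = [8, 9, 7, 9, 8, 8]
text_only = [6, 7, 5, 8, 6, 7]
t, df = welch_t(multimedia, text_only)
print(f"t = {t:.2f}, df = {df:.1f}")
```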

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources and methodologies for developing and testing enhanced informed consent processes.

| Research Reagent / Solution | Function & Explanation |
|---|---|
| Readability Analysis Software | Tools like online readability calculators quantitatively assess text difficulty using metrics such as Flesch-Kincaid Grade Level and Flesch Reading Ease, providing a baseline for simplification efforts [8]. |
| Large Language Models (LLMs) | AI models such as GPT-4 can efficiently simplify complex consent language, reduce word count, and restructure passive sentences, serving as a powerful first-pass tool in an AI-human collaborative framework [7]. |
| Validated Consent Evaluation Rubric | Structured rubrics, such as the 8-item tool on which AI-generated consent forms received a perfect score (20/20), provide a systematic method for evaluating the completeness and quality of consent documents [7]. |
| Enhanced eConsent Platforms | Digital platforms that host interactive consent materials, including videos, quizzes, and clickable glossaries, to improve participant comprehension and engagement through multimedia learning [10]. |
| Structured Ethical Framework | A comprehensive checklist of consent attributes (e.g., covering data storage, third-party sharing, profit models) ensures all ethical risks, especially those related to digital health technologies, are transparently communicated [9]. |
| Health Literacy & Digital Literacy Support | Training materials and dedicated support for participants are essential, as modern consent now requires both health and digital literacy to navigate technologies and understand digital formats [10]. |

Experimental Workflow for Consent Form Optimization

The diagram below outlines a logical workflow for optimizing informed consent forms, integrating AI simplification with rigorous expert validation.

Troubleshooting Guide: Time Constraint Challenges

Frequently Asked Questions

Q1: Our research team experiences significant time pressure during participant enrollment and the informed consent process. What are the core components of this time constraint? Time constraints in a project environment consist of several key components that create pressure on the research timeline [11]:

- Project Timeline: The overall timeframe for a study, including start date, end date, and critical milestones such as enrollment targets.

- Work Hours Allocation: The number of hours clinicians and staff can dedicate to consent-related tasks amidst other clinical duties.

- Internal Calendars: Organizational schedules, holidays, and staff availability that can limit the time available for research activities.

- Planning and Strategy Time: Sufficient time must be allocated for study planning and protocol development; rushed planning often leads to oversights and greater delays later [11].

Q2: Are there different types of time constraints we should plan for? Yes, time constraints generally fall into two categories, each with different origins [11]:

| Constraint Type | Description | Examples in Clinical Research |
|---|---|---|
| Internal Constraints | Originate from within the organization or team. | Limited staff availability, competing clinical duties, institutional review board (IRB) submission schedules, internal grant deadlines. |
| External Constraints | Originate from outside the organization and are often beyond direct control. | Funding agency deadlines, regulatory submission timelines, contractually obligated project milestones, sponsor-imposed enrollment deadlines. |

Q3: What practical strategies can we use to manage these time constraints effectively? Managing time constraints requires a proactive approach [11]:

- Spend Time on Project Planning: Invest adequate time upfront in creating a comprehensive research plan with clear goals and realistic time estimates to prevent backtracking and wasted effort.

- Create Realistic Schedules: Develop schedules that account for potential delays and avoid over-optimism. Use historical data from similar studies to inform your timeline.

- Track Time: Actively monitor the time spent on research tasks against the planned schedule. Small delays can accumulate and impact later stages.

- Delegate and Empower Team Members: Delegate tasks to qualified team members to avoid bottlenecks and boost morale.
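When a schedule already includes a buffer, tracking time can be reduced to watching cumulative overrun. The Python sketch below flags the first task at which the running overrun exceeds that buffer. The task names, hours, and 15% buffer are hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    planned_hours: float
    actual_hours: float


def first_buffer_breach(tasks, buffer_fraction=0.15):
    """Return (task name, cumulative overrun) for the first task at which the
    running overrun exceeds the schedule buffer, or (None, total overrun)."""
    buffer = buffer_fraction * sum(t.planned_hours for t in tasks)
    overrun = 0.0
    for t in tasks:
        overrun += t.actual_hours - t.planned_hours
        if overrun > buffer:
            return t.name, overrun
    return None, overrun


# Hypothetical tasks and hours.
tasks = [
    Task("IRB submission", 10, 12),
    Task("consent training", 8, 9),
    Task("screening week 1", 20, 26),
    Task("screening week 2", 20, 22),
]
print(first_buffer_breach(tasks))
```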

Q4: Our clinical staff reports high levels of strain. When do these strain episodes typically occur? Research shows that strain is not constant and varies across different phases of clinical work and among professional roles. A study in operating rooms found that strain levels significantly vary across the phases of an operation and between different professional groups (e.g., surgeons, anesthesiologists, nurses) [12]. For instance, surgeons often report more strain during the first and middle thirds of an operation, while other groups experience different patterns [12]. This suggests that high-strain phases requiring intense concentration are not uniform for all team members.

Q5: Can technology help alleviate time pressures in the informed consent process? Yes, digital tools show significant promise. Digitalizing the informed consent process can enhance patients' understanding of procedures, risks, and benefits [2]. For healthcare professionals, time savings are a major benefit of these digital systems [2]. Tools like the Virtual Multimedia Interactive Informed Consent (VIC) use iPads with a multimedia library to explain risks and benefits, incorporating features like a 'teach-back' process to enhance patient comprehension and potentially streamline the workflow for staff [13].

Experimental Protocols and Workflows

Quantitative Data on Operational Strain

The following data is derived from a study analyzing 693 guided recalls from operating room team members after 113 operations, providing a quantitative basis for understanding strain patterns [12].

Table 1: Mean Duration of Operations by Surgery Type [12]

| Surgery Type | Number of Operations | Mean Duration (Minutes) | Standard Deviation |
|---|---|---|---|
| Pediatric | 23 | 49.09 | 40.34 |
| Gynecology | 23 | 109.43 | 92.31 |
| General Surgery | 22 | 82.64 | 59.98 |
| Trauma/Emergency | 23 | 82.30 | 44.19 |
| Vascular | 22 | 68.14 | 44.03 |
| Total | 113 | 78.37 | 61.00 |

Table 2: Strain Variation Across Surgical Phases and Professions [12]

Statistical analysis using General Linear Modeling (GLM) revealed:

| Factor | Statistical Significance | Findings |
|---|---|---|
| Variation across surgical phases | Quadratic (F=47.85, p<0.001) and Cubic (F=8.94, p=0.003) effects | Strain is not constant; it fluctuates in a predictable pattern across phases (before incision, first, middle, and last third of surgery). |
| Variation across professional groups | Linear (F=4.14, p=0.001) and Quadratic (F=14.28, p<0.001) effects | Different roles (e.g., surgeons vs. anesthesiologists) experience strain differently throughout an operation. |
| Variation across surgery types | Cubic effects (F=4.92, p=0.001) | The pattern of strain also depends on the type of surgery being performed. |
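The linear, quadratic, and cubic terms in Table 2 are polynomial trend contrasts across the four ordered phases. A significant quadratic effect, for example, indicates strain that rises and then falls (or the reverse) over the operation. The Python sketch below computes the within-subject F statistic for each contrast in a single-factor design, where F(1, n-1) equals the square of a one-sample t statistic on each participant's contrast score. It assumes equally spaced phases and omits the profession and surgery-type factors of the cited GLM [12]. The strain data are invented.

```python
from math import sqrt
from statistics import mean, stdev

# Orthogonal polynomial coefficients for four equally spaced, ordered phases:
# before incision, first third, middle third, last third.
CONTRASTS = {
    "linear": (-3, -1, 1, 3),
    "quadratic": (1, -1, -1, 1),
    "cubic": (-1, 3, -3, 1),
}


def trend_F(rows):
    """rows: one list of four phase scores per participant.

    For each contrast, score every participant, then run a one-sample t-test
    of the mean score against zero; F(1, n-1) = t**2.
    """
    n = len(rows)
    result = {}
    for name, coeffs in CONTRASTS.items():
        scores = [sum(c * y for c, y in zip(coeffs, row)) for row in rows]
        t = mean(scores) / (stdev(scores) / sqrt(n))
        result[name] = t * t
    return result


# Invented strain-episode counts showing a rise-then-fall pattern.
rows = [[1, 3, 3, 1], [2, 4, 3, 1], [1, 4, 4, 2], [0, 3, 2, 1]]
print({k: round(v, 2) for k, v in trend_F(rows).items()})
```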

Methodology: Guided Recall for Strain Assessment

This protocol is adapted from a study on episodic strain in clinical settings [12].

- Objective: To assess the experience of strain from the perspective of clinical team members in relation to procedural phases.

- Design: Prospective, observational, guided recall study immediately following clinical procedures.

- Data Collection:

  - Tool: A guided recall method integrated into a short questionnaire.

  - Procedure: Immediately after a procedure, each team member individually draws a line on a graph representing the strain moments they experienced. The x-axis represents the procedure timeline (from pre-incision to end), and the y-axis represents the strain level.

  - Qualitative Data: Participants describe the nature of each tense moment.

- Data Preparation:

  - Translate drawings into numerical values based on the peak and slope of the curve.

  - Define procedural phases (e.g., before incision, first third, middle third, last third).

  - For each participant, calculate the total number of strain episodes per phase.

- Statistical Analysis: Analyze data using General Linear Modeling (GLM) with repeated measures to compare strain across phases, professional groups, and procedure types.
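The data-preparation step can be sketched as follows. The sketch assumes each drawing has been digitized into (time, strain) points, where time below 0 is before incision and 0 to 1 spans incision to the end of surgery. Counting an episode as a local peak at or above a threshold is an assumption of this Python example; the cited protocol also uses slope, which is not modelled here. The threshold and sample curve are hypothetical.

```python
PHASES = ("before incision", "first third", "middle third", "last third")


def phase_of(x: float) -> str:
    """Map a digitized time point to a phase (x < 0 is before incision)."""
    if x < 0:
        return PHASES[0]
    if x < 1 / 3:
        return PHASES[1]
    if x < 2 / 3:
        return PHASES[2]
    return PHASES[3]


def episodes_per_phase(curve, threshold=5.0):
    """Count strain episodes per phase for one participant.

    curve: (x, strain) points sorted by x. An episode is a local peak whose
    strain is at or above `threshold`; on a flat top only the first point counts.
    """
    counts = dict.fromkeys(PHASES, 0)
    for (_, prev), (x, y), (_, nxt) in zip(curve, curve[1:], curve[2:]):
        if y >= threshold and y > prev and y >= nxt:
            counts[phase_of(x)] += 1
    return counts


# Hypothetical digitized drawing (strain on a 0-10 scale).
curve = [(-0.2, 1), (-0.1, 6), (0.0, 2), (0.2, 3), (0.4, 8),
         (0.5, 4), (0.8, 7), (0.9, 7), (1.0, 1)]
print(episodes_per_phase(curve))
```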

Workflow and System Diagrams

Five Steps to Manage Constraints

Digital Consent Alleviates Strain

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Research on Time Constraints and Digital Consent

| Item | Function/Description | Application in Research |
|---|---|---|
| Guided Recall Protocol | A method where participants retrospectively chart their strain levels on a timeline after a task. | Quantifying temporal patterns of strain experienced by clinicians and staff during complex procedures [12]. |
| Virtual Multimedia Interactive Informed Consent (VIC) | An mHealth tool using iPads and a multimedia library to explain risks, benefits, and alternatives of a procedure. | Serves as both an intervention to streamline the consent process and a tool to study improvements in patient comprehension and workflow efficiency [13]. |
| General Linear Modeling (GLM) | A statistical framework for modeling the relationship between a dependent variable and one or more independent variables. | Analyzing how strain (dependent variable) varies across procedural phases, professional groups, and surgery types (independent variables) [12]. |
| Semi-Structured Interview Guides | A qualitative research tool with a flexible set of open-ended questions to explore a topic. | Gathering in-depth, contextual data from clinicians about triggers and experiences of strain moments that surveys may not capture [12]. |
| Throughput Accounting Metrics | An alternative accounting methodology focusing on Throughput, Investment, and Operating Expense. | Measuring the performance and financial impact of interventions designed to alleviate time constraints, focusing on increasing throughput rather than just cutting costs [14]. |

Technical Support Center: Troubleshooting Multimedia Consent Tools

Frequently Asked Questions (FAQs)

Q1: Our participants are showing poor comprehension of complex concepts like randomization. How can multimedia tools help? A: Research demonstrates that participants often score lowest on questions about randomization [15]. Multimedia tools address this by using animated videos and interactive diagrams to visually explain the process of random group assignment. This transforms an abstract concept into a tangible process participants can see and understand. Studies show that presenting information via animated video is significantly more effective than plain text or audio narration alone [16].

Q2: We work with low-literacy populations. Can digital consent tools be effective? A: Yes. International guidelines specifically recommend alternative consent procedures for low-literacy settings where written information does not guarantee comprehension [15]. A pilot study of a multimedia tool in The Gambia, where adult literacy is less than 30%, found that 70% of participants reported the tool was clear and easy to understand. Furthermore, participants' comprehension scores for "recall" and "understanding" showed statistically significant improvements between initial and follow-up visits [15].

Q3: Are researchers and Institutional Review Boards (IRBs) receptive to replacing paper documents with digital systems? A: While researchers and IRB members find digital systems valuable for improving understanding and reducing patient stress, they often have concerns. These include how to review the system for potential biases in presentation and the legal issues associated with replacing the paper document entirely [17]. A phased approach, using the digital tool to augment rather than immediately replace the traditional process, can help build institutional comfort.

Q4: Does using a more engaging, multimedia format unduly influence or coerce participants into consenting? A: This is a key ethical consideration. The available research from randomized controlled trials suggests that enhancing the consent process to provide more useful information for decision-making does not affect the clinical trial entry decision [17]. The goal is to facilitate genuine understanding, not to persuade.

Troubleshooting Common Technical and Process Issues

Issue: Participant is anxious or struggles to use the tablet interface.

- Diagnosis: Human factors and accessibility challenge.

- Solution:

  - Empathize and reassure: Position yourself as the participant's advocate. Use phrases like, "I understand this can be frustrating, let's work through this together" [18].

  - Simplify the interface: Ensure the application offers a simple, linear navigation path for first-time users.

  - Provide support: Be present to offer assistance, but allow the participant to control the device to maintain their sense of autonomy [17].

Issue: Unable to verify if a participant has understood the key study information.

- Diagnosis: Lack of integrated comprehension assessment.

- Solution:

  - Implement in-app quizzes: Use automated, brief quizzes on crucial domains like risks and voluntary participation to gauge understanding [16].

  - Employ the teach-back method: Ask participants to explain the study in their own words. The multimedia tool can facilitate this by providing clear, consistent information for them to summarize [16].

  - Adopt a multi-step approach: Combine the multimedia presentation with a verbal discussion to reinforce key points, a method shown to be particularly useful for elderly or cognitively impaired participants [17].
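Pairing in-app quizzes with teach-back can be as simple as scoring answers by consent domain and flagging weak domains for discussion. The Python sketch below uses hypothetical quiz items and treats any missed answer in a domain as a trigger for teach-back (a pass mark of 100%). Both are illustrative choices, not recommendations from the cited studies.

```python
# Hypothetical quiz items: item id -> (consent domain, correct option).
ANSWER_KEY = {
    "q1": ("risks", "b"),
    "q2": ("risks", "a"),
    "q3": ("voluntary participation", "c"),
    "q4": ("randomization", "a"),
}


def domains_for_teach_back(responses: dict, pass_mark: float = 1.0) -> list:
    """Domains whose proportion of correct answers is below pass_mark, sorted."""
    correct, total = {}, {}
    for item, (domain, key) in ANSWER_KEY.items():
        total[domain] = total.get(domain, 0) + 1
        correct[domain] = correct.get(domain, 0) + int(responses.get(item) == key)
    return sorted(d for d in total if correct[d] / total[d] < pass_mark)


print(domains_for_teach_back({"q1": "b", "q2": "c", "q3": "c", "q4": "b"}))
```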

Issue: The consent process still takes too long, creating bottlenecks in recruitment.

- Diagnosis: Inefficient process flow.

- Solution:

  - Leverage self-paced learning: Allow participants to review all or parts of the consent material independently on a tablet before the formal consent discussion. A randomized controlled trial found that users of a multimedia tool reported a shorter perceived time to complete the process [16].

  - Use a modular design: Structure the information in the tool hierarchically, allowing participants to dive deeper into complex topics (like side effects) while skimming more straightforward ones [17]. This is more efficient than a linear, paper-based reading.
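One way to represent a hierarchical design is as a tree of sections. Only the top-level summaries are shown by default, and a participant expands the topics they want to explore. The Python sketch below is a hypothetical data structure for that behavior. The section titles are placeholders.

```python
from dataclasses import dataclass, field


@dataclass
class Section:
    title: str
    summary: str
    children: list = field(default_factory=list)  # nested Section objects


def visible(sections, expanded, depth=0):
    """Yield (depth, title) for each section shown: all sections at this level,
    plus the children of any section whose title is in `expanded`."""
    for s in sections:
        yield depth, s.title
        if s.title in expanded:
            yield from visible(s.children, expanded, depth + 1)


# Hypothetical consent content.
consent = [
    Section("Purpose of the study", "Why we are doing this research."),
    Section("Side effects", "What could go wrong.", [
        Section("Common side effects", "Mild and short-lived effects."),
        Section("Rare but serious side effects", "Unlikely but important risks."),
    ]),
    Section("Voluntary participation", "You can say no or stop at any time."),
]
for depth, title in visible(consent, expanded={"Side effects"}):
    print("  " * depth + title)
```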

Experimental Protocols & Research Data

The table below synthesizes quantitative data from pivotal studies evaluating multimedia informed consent tools against traditional paper-based methods.

Table 1: Comparison of Multimedia vs. Paper-Based Informed Consent Processes

| Study & Design | Participant Group | Key Comprehension Findings | Satisfaction & Usability Findings |
|---|---|---|---|
| RCT of VIC Tool [16] (Randomized Controlled Trial) | 50 participants in a real-world biorepository study (n=25 VIC, n=25 paper). | Both groups showed high comprehension. | Higher satisfaction in VIC group; higher perceived ease of use with VIC; shorter perceived time to complete consent with VIC. |
| Pilot of Multimedia Tool [15] (Pre-Post Pilot Study) | Low-literacy participants in The Gambia. | Statistically significant increases in mean scores for 'recall' (F(1,41)=25.38, p<0.00001) and 'understanding' (F(1,41)=31.61, p<0.00001) between first and second visits. | 70% of participants reported the multimedia tool was clear and easy to understand. |
| Needs Assessment [17] (Focus Groups & Interviews) | Patients with depression, breast cancer, or schizophrenia; researchers; IRB members. | Patients felt multimedia (video) made information more understandable. | Patients reported the process would be less stressful and provide a greater sense of control when using a self-paced multimedia system. |

Detailed Methodology: Randomized Controlled Trial of a Multimedia Tool (VIC)

Objective: To evaluate the feasibility of the Virtual Multimedia Interactive Informed Consent (VIC) tool and compare it with traditional paper-based methods in an ongoing, real-world study (GenEx 2.0) [16].

Tool Design: The VIC tool was developed based on user-centered design and Mayer’s cognitive theory of multimedia learning. It featured:

- A comprehensive multimedia library (video clips, animations, presentations).

- Virtual coaching with automated text-to-speech.

- Interactive elements allowing participants to navigate sections freely.

- Automated quizzes to assess comprehension.

- Electronic signature capabilities [16].

Participant Recruitment:

- Source: Recruited from the Winchester Chest Clinic and surrounding community.

- Eligibility: Adults older than 21 years who spoke English, could provide an email address, and were willing to use an iPad. Computer literacy was not required [16].

Randomization Protocol:

- Eligible participants were randomized 1:1 to the VIC (intervention) or paper (control) arm.

- A computer algorithm using the minimization method was used to ensure balance on demographics (gender, race, education, etc.) due to the small sample size [16].
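The cited trial does not specify which minimization variant it used. As an assumption, the sketch below implements a common Pocock-Simon-style approach for two arms: for each candidate arm it computes the range of arm counts at the new participant's level of each factor, sums these across factors, and favors the arm with the smaller total imbalance with an assumed probability `p_best` of 0.8, falling back to random allocation on ties. The function name `minimization_assign` and the factor names are hypothetical.

```python
import random

ARMS = ("VIC", "paper")


def minimization_assign(new_participant, allocated, factors, rng, p_best=0.8):
    """Pocock-Simon-style minimization for two arms (illustrative sketch).

    new_participant: dict of factor -> level, e.g. {"gender": "F"}
    allocated: list of (participant_dict, arm) pairs already randomized
    factors: names of the factors to balance on
    p_best: assumed probability of choosing the arm that minimizes imbalance
    """
    scores = {}
    for candidate in ARMS:
        total = 0
        for f in factors:
            level = new_participant[f]
            # Count earlier participants with the same level of this factor, per arm.
            counts = {arm: sum(1 for p, a in allocated if a == arm and p[f] == level)
                      for arm in ARMS}
            counts[candidate] += 1  # as if the new participant joined `candidate`
            total += max(counts.values()) - min(counts.values())  # range imbalance
        scores[candidate] = total
    best = min(scores.values())
    preferred = [arm for arm in ARMS if scores[arm] == best]
    if len(preferred) == len(ARMS):
        return rng.choice(ARMS)  # tie: plain random allocation
    other = [arm for arm in ARMS if arm not in preferred]
    return preferred[0] if rng.random() < p_best else rng.choice(other)


if __name__ == "__main__":
    rng = random.Random(2024)
    factors = ("gender", "race", "education")  # hypothetical factor levels below
    allocated = []
    for _ in range(50):
        person = {"gender": rng.choice("FM"),
                  "race": rng.choice(["A", "B", "C"]),
                  "education": rng.choice(["HS", "College"])}
        allocated.append((person, minimization_assign(person, allocated, factors, rng)))
    print({arm: sum(1 for _, a in allocated if a == arm) for arm in ARMS})
```

Keeping a random element (`p_best` below 1) is a common design choice so that allocations are not fully predictable to recruiting staff.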

Outcome Measures:

- Primary: Comprehension of study information.

- Secondary: Participant satisfaction, perceived ease of use, ability to complete consent independently, and perceived time to complete the process. Outcomes were collected via coordinator-administered questionnaires post-consent [16].

Visualizing the Workflow: From Development to Implementation

The following diagram illustrates the end-to-end process of developing, testing, and implementing a multimedia consent tool, based on the methodologies cited.

Multimedia consent tool development and implementation workflow.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagents and Materials for Multimedia Consent Research

| Item | Function in Research |
|---|---|
| Tablet Computer (e.g., iPad) | The primary hardware for delivering the interactive consent application. Allows for self-paced review and can be used in various clinical settings [16]. |
| Multimedia Authoring Software | Software used to create and integrate multimedia elements (video, animations, audio) into the consent tool, based on principles of cognitive theory [16]. |
| Digital Video Recording Equipment | Used to film role-played scenarios that explain study procedures, risks, and benefits in a relatable, context-specific manner [15]. |
| Audio Recording & Translation Files | Professionally translated and recorded audio narrations in local languages are crucial for low-literacy and non-English-speaking populations [15]. |
| Randomization Software/Algorithm | Essential for conducting rigorous RCTs. Minimization algorithms can balance groups on key demographic variables in smaller studies [16]. |
| Validated Digital Questionnaire | A digitized audio questionnaire can be used to assess participant comprehension, especially in low-literacy settings where written tests are not feasible [15]. |
| Web-Based Coaching/Avatar System | A virtual coach or avatar that guides participants through the consent form using text-to-speech, improving engagement and understanding [16]. |
| Electronic Signature System | Allows for seamless and secure documentation of consent within the digital tool, with potential for integration into electronic health records [16]. |

Core Technical Specifications for Accessible Design

Adherence to technical standards is critical for ensuring tools are usable by all participants, including those with visual impairments.

Table 3: WCAG 2.2 Level AA Color Contrast Requirements

| Element Type | Minimum Contrast Ratio | Example Requirement |
|---|---|---|
| Normal Text | 4.5:1 | Text in #777 on a white (#FFF) background fails (about 4.48:1, just under the threshold), while #666 (about 5.7:1) and #333 (about 12.6:1) pass [5] [19]. |
| Large Text (≥18pt / 24px, or ≥14pt / about 18.66px bold) | 3:1 | 18pt text in #000 on a #777 background has a ratio of about 4.7:1, which passes the 3:1 AA minimum and also meets the enhanced (AAA) 4.5:1 requirement for large text [5] [20]. |
| User Interface Components | 3:1 | Borders, buttons, and other visual indicators required to identify a component or its state must meet this minimum against adjacent colors [20]. |
| Graphics & Charts | 3:1 | Parts of graphics required to understand the content, such as the colors that distinguish elements in a flowchart, must meet this minimum [20]. |

Logic for testing text contrast against WCAG Level AA requirements.
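The testing logic can be sketched in Python using the WCAG 2.x definitions of relative luminance and contrast ratio, (L1 + 0.05) / (L2 + 0.05), where L1 is the luminance of the lighter color. The helper names are hypothetical; the thresholds are the Level AA values from Table 3, and ratios are compared without rounding, as WCAG requires (4.48:1 does not meet 4.5:1).

```python
def _channel_to_linear(c8: int) -> float:
    """Convert one 8-bit sRGB channel to linear light (WCAG 2.x formula)."""
    c = c8 / 255
    return c / 12.92 if c <= 0.04045 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a #RGB or #RRGGBB color, from 0 (black) to 1 (white)."""
    h = hex_color.lstrip("#")
    if len(h) == 3:                      # expand shorthand such as "333"
        h = "".join(ch * 2 for ch in h)
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return (0.2126 * _channel_to_linear(r)
            + 0.7152 * _channel_to_linear(g)
            + 0.0722 * _channel_to_linear(b))


def contrast_ratio(fg: str, bg: str) -> float:
    """Contrast ratio between two colors, from 1.0 up to 21.0."""
    lighter, darker = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (lighter + 0.05) / (darker + 0.05)


def passes_aa(fg: str, bg: str, large_text: bool = False) -> bool:
    """Level AA text check: 4.5:1 for normal text, 3:1 for large text, no rounding."""
    threshold = 3.0 if large_text else 4.5
    return contrast_ratio(fg, bg) >= threshold


if __name__ == "__main__":
    for fg in ("#333", "#666", "#777", "#4285F4"):
        ratio = contrast_ratio(fg, "#FFF")
        print(f"{fg} on #FFF: {ratio:.2f}:1, AA normal text: {passes_aa(fg, '#FFF')}")
```

The same functions can be run over every text and background pair in a palette before it is approved for use.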

The How: A Practical Guide to Implementing Multimedia Consent Tools

Troubleshooting Guides and FAQs for Digital Informed Consent Platforms

This technical support center addresses common issues researchers and professionals might encounter when implementing multimedia tools for informed consent in clinical and public health research.

Frequently Asked Questions (FAQs)

Q1: Our participants have varying levels of digital literacy. How can we ensure our digital consent tool is accessible to everyone? A1: Implement a hybrid consent approach. Offer both digital and paper-based options, allowing participants to choose based on their comfort and accessibility [21]. Furthermore, ensure your digital platform adheres to accessibility standards, such as providing high color contrast (a minimum ratio of 4.5:1 for standard text) and text-to-speech functionality to support those with visual impairments or lower literacy levels [22] [16].

Q2: We are using an eConsent app, but our post-test comprehension scores are lower than expected. What can we do? A2: Comprehension is multi-factorial. Consider enhancing your platform with two key features derived from successful trials:

- Integrated Quizzes and Teach-Back: Incorporate automated, low-stakes quizzes throughout the consent process to reinforce understanding and identify areas that need more clarification [16].

- Multimedia Explanations: Replace or supplement dense text with animated videos. One frequently cited estimate holds that viewers retain 95% of a message from video compared with 10% from text [23]; this widely repeated figure should be interpreted with caution, but short videos can still make complex topics like randomization or data handling easier to grasp.
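A minimal sketch of the quiz feature in Python, assuming hypothetical names (`QuizItem`, `score_quiz`), placeholder questions, and an assumed pass mark of 80%: each question is tagged with the consent section it checks, and sections with wrong answers are returned so the tool can route the participant back to them before the consent discussion or signature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class QuizItem:
    section: str   # consent section the question checks
    prompt: str
    correct: str


def score_quiz(items, answers, pass_fraction=0.8):
    """Return (score, passed, sections_to_review).

    answers maps each prompt to the participant's answer. Any section with
    a wrong or missing answer is flagged once, in question order, so the
    tool can offer a remediation path back to that section.
    """
    sections_to_review = []
    n_correct = 0
    for item in items:
        given = answers.get(item.prompt, "").strip().lower()
        if given == item.correct.lower():
            n_correct += 1
        elif item.section not in sections_to_review:
            sections_to_review.append(item.section)
    score = n_correct / len(items)
    return score, score >= pass_fraction, sections_to_review


# Placeholder questions for illustration only.
ITEMS = [
    QuizItem("Voluntary participation", "Can you withdraw at any time without penalty?", "yes"),
    QuizItem("Randomization", "Do you choose which group you are placed in?", "no"),
    QuizItem("Risks", "Can the blood draw cause bruising?", "yes"),
]
```

Because the quiz is low-stakes, a failed check would trigger review of the flagged sections and a conversation with staff, not exclusion.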

Q3: Our ethics committee has concerns about replacing the paper consent form. How should we address this? A3: Engage with your ethics board early in the process. Acknowledge their valid concerns, which often center on legal acceptance, potential biases in multimedia presentation, and ensuring participant comprehension [17]. You can present evidence from randomized controlled trials that show digital tools can lead to high comprehension and greater participant satisfaction [16]. Proposing a pilot study comparing digital and paper methods can also provide local data to alleviate concerns.

Q4: Is it feasible to obtain valid informed consent remotely for a fully decentralized trial? A4: Yes, with careful planning. The FDA and EMA define electronic informed consent (eConsent) as the use of electronic systems to convey information and obtain consent, which can be done remotely [21]. The key is to maintain interaction. For fully remote processes, schedule a video consultation where the research team can discuss the trial, answer questions, and ensure the participant understands the material, mirroring the traditional face-to-face interaction [21].

Technical Troubleshooting Guide

| Issue | Possible Cause | Solution |
|---|---|---|
| Participants report that the text is difficult to read on screen. | Insufficient color contrast between text and background. | Use a color contrast checker to ensure a ratio of at least 4.5:1 for standard text and 3:1 for large-scale text. Use high-contrast color pairs like dark gray (#333) on white (#FFF) [22]. |
| Low participation rates for a digitally presented consent. | The tool may be perceived as impersonal or may skew towards digitally literate users only. | Adopt a participant-centered design. Use a hybrid approach combining digital tools with personal interaction [21] [24]. Ensure the platform is available in multiple languages and uses a simple, intuitive interface [24]. |
| Difficulty tracking participant understanding during the remote consent process. | The digital process lacks mechanisms to assess comprehension in real time. | Utilize built-in features like interactive quizzes and the "teach-back" method, where participants explain concepts in their own words, to gauge and improve understanding dynamically [16]. |
| Legal and administrative concerns about electronic signatures and audit trails. | Uncertainty about regulatory acceptance of digital records. | Use an eConsent system that automatically records a secure, time-stamped audit trail of the participant's interaction with the consent materials, providing a robust documentation chain for inspectors and sponsors [21]. |
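To illustrate what a tamper-evident audit trail involves, the following Python sketch chains each time-stamped entry to the SHA-256 hash of the previous one, so altering any earlier record causes verification to fail. The `AuditTrail` class and event names are hypothetical; a production eConsent system would also need secure storage, access controls, and validation against applicable regulatory requirements, none of which this sketch addresses.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS = "0" * 64  # placeholder "previous hash" for the first entry


class AuditTrail:
    """Append-only, hash-chained event log (illustrative sketch only)."""

    def __init__(self):
        self.entries = []

    def record(self, participant_id: str, event: str, detail: str = "") -> dict:
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS
        entry = {
            "timestamp": datetime.now(timezone.utc).isoformat(),
            "participant_id": participant_id,
            "event": event,
            "detail": detail,
            "prev_hash": prev_hash,
        }
        entry["hash"] = self._digest(entry)
        self.entries.append(entry)
        return entry

    @staticmethod
    def _digest(entry: dict) -> str:
        # Hash every field except the entry's own hash, with keys in a stable order.
        payload = {k: v for k, v in entry.items() if k != "hash"}
        return hashlib.sha256(json.dumps(payload, sort_keys=True).encode()).hexdigest()

    def verify(self) -> bool:
        """Recompute the chain; any edited, reordered, or deleted entry breaks it."""
        prev_hash = GENESIS
        for entry in self.entries:
            if entry["prev_hash"] != prev_hash or entry["hash"] != self._digest(entry):
                return False
            prev_hash = entry["hash"]
        return True
```

For example, recording `viewed_section`, `quiz_completed`, and `signed` events for one participant yields a chain that verifies, and changing the detail of any earlier event makes `verify()` return `False`.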

Experimental Protocols and Data from Key Studies

The following table summarizes quantitative data from key studies investigating digital informed consent tools, providing a benchmark for your own experiments.

Table 1: Quantitative Outcomes from Digital Consent Feasibility Studies

| Study & Digital Tool | Design | Key Quantitative Findings |
|---|---|---|
| Virtual Multimedia Interactive Informed Consent (VIC) [16] | Randomized Controlled Trial (N=50) | • High Comprehension: Both VIC and paper groups had high comprehension scores. • Higher Satisfaction: VIC participants reported greater satisfaction. • Perceived Efficiency: VIC users reported shorter perceived time to complete consent and a higher ability to work independently. |
| Digital Informed Consent App [24] | Mixed-Method Feasibility Study (N=30) | • Usage Time: Participants used the app for 4-15 minutes to provide consent. • Positive Usability: Overall, the app was found to be well designed and easy to use. • Information Retention: While all participants remembered various study aspects, fewer than half answered all retention questions satisfactorily. |
| Personalized Electronic Informed Consent (eConsent) [21] | Review of Empirical Evidence | • Improved Understanding: Interactive eConsent with hyperlinks to additional content led to higher understanding of information at a 6-month follow-up compared with a standard, non-customizable model. • Administrative Efficiency: eConsent supports more efficient documentation and oversight through automatic audit trails. |

Detailed Methodology: VIC Randomized Controlled Trial

Objective: To evaluate the feasibility of the Virtual Multimedia Interactive Informed Consent (VIC) tool and compare it with traditional paper-based methods in a real-world research setting [16].

1. Trial Design

- A randomized controlled trial was embedded within an ongoing biorepository study (GenEx 2.0).

- Participants were allocated to either the VIC tool on an iPad (intervention arm) or the standard paper consent (control arm) in a 1:1 ratio.

- A computer algorithm using the minimization method ensured balance on demographic characteristics like gender, race, and education.

- The primary outcomes were comprehension and satisfaction, assessed via coordinator-administered questionnaires immediately after the consent process [16].

2. Participants

- Recruitment: Participants with lung disease were recruited from a chest clinic, and healthy individuals were recruited from the community via fliers.

- Inclusion Criteria: Participants were eligible if they spoke English, were older than 21, provided an email address, and were willing to use an iPad. Computer literacy was not required.

- Sample Size: A total of 50 participants were enrolled (25 per arm) [16].

3. Intervention: The VIC Tool

- Theoretical Framework: The tool was designed based on Mayer's cognitive theory of multimedia learning.

- Key Features:

- A virtual coach with text-to-speech narration.

- A comprehensive multimedia library (video clips, animations) to explain risks, benefits, and procedures.

- A non-linear, hierarchical structure allowing participants to navigate sections and drill down for more information.

- Automated quizzes to assess comprehension.

- Electronic signature and secure data recording [16].

4. Data Collection

- Following the consent process and parent study procedures, participants were surveyed about their comprehension and satisfaction.

- The study specifically measured satisfaction, perceived ease of use, and perceived time to complete the process [16].

Visualization of a Digital Informed Consent Implementation Workflow

The diagram below outlines the key phases and decision points for implementing a digital informed consent solution in a research setting.

Research Reagent Solutions: Essential Components for Digital Consent

This table details the key "research reagents"—the core components and platforms—required to develop and implement an effective digital informed consent system.

Table 2: Essential Materials and Tools for Digital Informed Consent Research

| Item | Function in the Research Process |
|---|---|
| Multimedia Learning Theory Framework | Provides the foundational cognitive principles for designing content that minimizes extraneous load and maximizes understanding, as exemplified by Mayer's theory [16]. |
| User-Centered Design (UCD) Protocol | A methodology for involving end-users (patients and researchers) throughout the development process to ensure the final tool is usable, accessible, and meets real-world needs [16] [24]. |
| Randomized Controlled Trial (RCT) Design | The gold-standard methodology for empirically comparing the efficacy (comprehension, satisfaction) of a new digital consent tool against traditional paper-based methods [16]. |
| Interactive eConsent Platform | A configurable digital system (e.g., web-based app) that supports multimedia integration, interactive quizzes, electronic signatures, and secure audit trails [21] [16]. |
| Accessibility and Contrast Checking Tools | Software tools that validate that color contrast ratios meet WCAG guidelines (e.g., 4.5:1 for text), ensuring the tool is accessible to users with visual impairments [22]. |
| Hybrid Consent Protocol | A pre-defined operational plan for offering both digital and paper-based consent options to prevent the exclusion of participants with low digital literacy or specific preferences [21]. |

The integration of multimedia learning principles into research tools, particularly those supporting the informed consent process, represents a significant advancement in ethical research practice. Mayer's Cognitive Theory of Multimedia Learning provides an evidence-based framework for designing materials that promote genuine understanding rather than mere compliance [25]. This approach is especially valuable in clinical and research settings where participant comprehension is ethically paramount yet often inadequately achieved through traditional paper-based methods [17] [16].

This technical support center applies Mayer's principles to create effective troubleshooting guides and FAQs specifically designed for researchers developing multimedia tools for informed consent. By structuring support materials according to how people actually process information, we can enhance both the development process and the ultimate effectiveness of these critical research tools.

Core Principles of Mayer's Theory: A Research Implementation Framework

Mayer's theory rests on three fundamental assumptions about how humans process information, with direct implications for designing research tools and support materials [26] [25]:

- Dual-channel assumption: People have separate channels for processing visual/pictorial material and auditory/verbal material

- Limited-capacity assumption: Each channel has limited capacity for processing information at one time

- Active-processing assumption: Meaningful learning occurs when people engage in active cognitive processing

From these assumptions, Mayer developed 12 specific principles that guide effective multimedia design. The table below summarizes these principles with specific applications to informed consent tool development:

Table: Mayer's 12 Principles of Multimedia Learning Applied to Informed Consent Tools

| Principle | Core Concept | Application to Informed Consent Tools |
|---|---|---|
| Multimedia | Words + pictures > words alone | Combine narration with relevant visuals explaining procedures [26] [25] |
| Coherence | Exclude extraneous material | Remove non-essential graphics and text not directly related to consent concepts [26] |
| Signaling | Highlight essential information | Use cues to emphasize critical risks or procedures [26] |
| Redundancy | Graphics + narration > graphics + narration + text | Avoid on-screen text that duplicates the narration word for word [26] |
| Spatial Contiguity | Place corresponding words and pictures near each other | Position labels close to the relevant diagram elements [26] |
| Temporal Contiguity | Present corresponding words and pictures simultaneously | Synchronize animations with narration [26] |
| Segmenting | Break content into learner-paced segments | Chunk complex study information into manageable parts [26] |
| Pre-training | Provide key concept definitions first | Explain terms like "randomization" before the main content [26] |
| Modality | Graphics + narration > graphics + on-screen text | Use spoken explanations for complex visual sequences [26] |
| Voice | Human voice > machine voice | Use friendly human narration rather than synthetic voices [26] |
| Personalization | Conversational style > formal style | Address participants directly ("you") in accessible, conversational language [26] |
| Image | Adding the speaker's image does not necessarily improve learning | Use talking-head videos sparingly; focus on relevant visuals [26] |

Technical Support: Troubleshooting Guides for Multimedia Consent Tools

Systematic Troubleshooting Approaches

When developing multimedia consent tools, researchers may encounter various technical and comprehension-related challenges. The following troubleshooting approaches provide structured methodologies for identifying and resolving these issues:

Table: Troubleshooting Methodologies for Multimedia Consent Tool Development

| Approach | Best For | Implementation Steps |
|---|---|---|
| Top-Down [27] | Complex systems with multiple components | 1. Start with a broad system overview; 2. Gradually narrow to specific components; 3. Identify the highest-level issue first |
| Bottom-Up [27] | Specific, well-defined problems | 1. Begin with the specific problem; 2. Work upward to higher-level issues; 3. Focus on immediate symptoms first |
| Divide-and-Conquer [27] | Complex, multi-factorial issues | 1. Divide the problem into smaller subproblems; 2. Solve each subproblem recursively; 3. Combine the solutions to solve the original problem |
| Follow-the-Path [27] | Understanding user interaction flows | 1. Trace the user's path through the consent tool; 2. Identify where comprehension breaks down; 3. Isolate the specific interaction points causing confusion |
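The Follow-the-Path approach can be applied to interaction logs. The Python sketch below assumes a hypothetical log format of `(participant_id, section, answered_correctly)` tuples in the order they were recorded; it traces each participant's path and counts the section where that participant first answered a comprehension question incorrectly, pointing to where comprehension most often breaks down.

```python
from collections import Counter, defaultdict


def first_breakdown_points(events):
    """Trace each participant's path and find where comprehension first fails.

    events: (participant_id, section, answered_correctly) tuples in the order
    they were logged. Returns a Counter mapping section -> number of
    participants whose first incorrect answer occurred in that section.
    """
    paths = defaultdict(list)
    for pid, section, correct in events:
        paths[pid].append((section, correct))

    first_failures = Counter()
    for steps in paths.values():
        for section, correct in steps:
            if not correct:
                first_failures[section] += 1
                break  # only the first breakdown on each path is counted
    return first_failures


if __name__ == "__main__":
    log = [  # hypothetical log entries
        ("p1", "Purpose", True), ("p1", "Randomization", False),
        ("p2", "Purpose", False),
    ]
    print(first_breakdown_points(log).most_common())
```

Counting only the first failure keeps later errors, which may be knock-on effects of an earlier misunderstanding, from inflating downstream sections.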

Frequently Asked Questions: Technical Implementation

Q: How can we effectively measure comprehension in multimedia consent tools compared to traditional methods?

A: Implement built-in assessment quizzes that test understanding of key concepts [16]. Evidence on comprehension gains is mixed: in one randomized controlled trial, participants using the multimedia VIC tool and those using traditional paper consent both showed high comprehension, while the VIC group reported higher satisfaction and ease of use [16]. Built-in checks also let the research team identify individual misunderstandings as they occur. The assessment should focus on core concepts such as study procedures, risks, and voluntary participation.

Q: What technical specifications ensure accessibility in multimedia consent tools?

A: Adhere to WCAG 2.2 Level AA contrast requirements [5] [20]:

- Minimum contrast ratio of 4.5:1 for normal text (7:1 for enhanced contrast)

- Minimum contrast ratio of 3:1 for large text (18pt+/14pt+ bold)

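- Large text means at least 18pt (24px) regular text or at least 14pt (about 18.66px) bold text; 18.66px qualifies as large only when the text is bold

- Explicitly set the text color (fontcolor) rather than relying on defaults, so that it contrasts sufficiently with the background color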

Q: How do we balance multimedia elements without creating cognitive overload?

A: Apply Mayer's Coherence Principle by excluding extraneous material [26]. Research indicates that 60-70% of individuals don't fully understand traditional consent forms, often due to information overload [17] [16]. Use the Segmenting Principle to break complex information into learner-paced chunks, and the Signaling Principle to highlight essential information like risks and key procedures [26].

Q: What approaches work best for different demographic groups, including those with potential cognitive impairments?

A: Implement adaptive presentation strategies based on pre-assessment [17]. The Virtual Multimedia Interactive Informed Consent (VIC) tool, based on Mayer's theory, successfully used a modular approach with techniques to improve understandability for diverse populations [16]. Patients reported less stress and greater sense of control with self-paced multimedia tools [17].

Experimental Protocols & Implementation Framework

Validation Methodology for Multimedia Consent Tools

Table: Key Experimental Metrics for Multimedia Consent Tool Validation

| Metric Category | Specific Measures | Data Collection Methods | Target Outcomes |
|---|---|---|---|
| Comprehension | Immediate recall, Conceptual understanding, Risk awareness [17] [16] | Standardized questionnaires, Teach-back assessment [16] | >25% improvement vs paper consent |
| User Experience | Satisfaction scores, Perceived ease of use, Completion time [16] | Likert scales, System analytics, Time tracking | >90% satisfaction, Reduced completion time |
| Technical Performance | Accessibility compliance, System reliability, Cross-platform functionality | Automated testing, WCAG validation, Device testing | 100% WCAG AA compliance, <1% crash rate |
| Process Efficiency | Staff time required, Question rates, Re-consent needs | Time-motion studies, Question logging, Follow-up assessments | >30% reduction in staff time |

The Researcher's Toolkit: Essential Technical Components

Table: Research Reagent Solutions for Multimedia Consent Tool Development

| Component | Function | Implementation Example | Technical Specifications |
|---|---|---|---|
| Multimedia Content Library | Explain risks, benefits, procedures [16] | Video clips, animations, presentations | MP4/H.264, WebM/VP9, accessible player controls |
| Comprehension Assessment Module | Test understanding of key concepts [16] | Automated quizzes, interactive Q&A | Scoring algorithm, progress tracking, remediation paths |
| Accessibility Compliance Engine | Ensure WCAG 2.2 AA compliance [5] [20] | Color contrast validation, screen reader compatibility | 4.5:1 contrast ratio, keyboard navigation, ARIA labels |
| Multi-platform Delivery System | Consistent experience across devices [16] | Responsive web design, adaptive streaming | HTML5, CSS media queries, cross-browser testing |
| Analytics & Reporting Dashboard | Track usage, comprehension, engagement [16] | User interaction logging, comprehension analytics | GDPR-compliant data collection, real-time reporting |

Implementation Framework: Color and Accessibility Specifications

Approved Color Palette with Contrast Compliance

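The following color palette is intended to support accessibility while maintaining visual consistency across multimedia consent tools. Each color's contrast ratio (against white, or against Dark Gray for white itself) is listed so it can be checked against the WCAG 2.2 Level AA thresholds [28] [20]. Colors that fail the 4.5:1 normal-text threshold should be reserved for large text or non-text elements that still reach 3:1, or for purely decorative use: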

Table: Approved Color Palette with Contrast Applications

| Color Name | Hex Code | RGB Values | Primary Use | Contrast Compliance |
|---|---|---|---|---|
| Google Blue | #4285F4 | (66,133,244) | Primary actions, interactive elements | About 3.56:1 on white (fails AA for normal text; meets 3:1 for large text and UI components) |
| Google Red | #EA4335 | (234,67,53) | Warnings, important alerts | About 3.92:1 on white (fails AA for normal text; meets 3:1 for large text and UI components) |
| Google Yellow | #FBBC05 | (251,188,5) | Secondary elements, highlights | About 1.71:1 on white (fails AA) |
| Google Green | #34A853 | (52,168,83) | Success states, confirmations | About 3.06:1 on white (fails AA for normal text; meets 3:1 for large text and UI components) |
| White | #FFFFFF | (255,255,255) | Backgrounds, light text on dark | About 16.1:1 on Dark Gray #202124 (passes AAA) |
| Light Gray | #F1F3F4 | (241,243,244) | Secondary backgrounds, borders | About 1.11:1 on white (fails; use only for decorative borders and backgrounds) |
| Dark Gray | #202124 | (32,33,36) | Primary text, dark backgrounds | About 16.1:1 on white (passes AAA) |
| Medium Gray | #5F6368 | (95,99,104) | Secondary text, less important elements | About 6.05:1 on white (passes AA) |

Technical Implementation Checklist

- Apply Mayer's Principles in content design and organization

- Implement WCAG 2.2 AA contrast requirements (4.5:1 minimum for normal text)

- Include comprehension assessment modules with automated scoring

- Ensure cross-platform compatibility across devices and browsers

- Provide self-paced segmenting for complex information

- Use human voice narration rather than synthetic voices

- Implement analytics to track comprehension and engagement metrics

- Validate with diverse user groups including those with cognitive impairments

- Compare outcomes against traditional paper-based consent methods

- Document comprehension rates and user satisfaction metrics

This technical support framework provides researchers with evidence-based methodologies for developing, troubleshooting, and validating multimedia consent tools grounded in Mayer's Cognitive Theory of Multimedia Learning. By implementing these structured approaches, research teams can create more effective informed consent processes that genuinely enhance participant understanding while maintaining rigorous technical and accessibility standards.

The digitalization of the informed consent process for medical procedures and research represents a significant advancement in healthcare, aiming to overcome the well-documented challenges of traditional paper-based methods, such as low comprehensibility and lack of customization [2]. Within this context, multimedia tools offer remarkable potential to enhance patient understanding of clinical procedures, potential risks, benefits, and alternative treatments [2]. The foundation for realizing this potential lies in implementing a rigorous User-Centered Design (UCD) process, a systematic approach that involves patients and other stakeholders throughout the development lifecycle to ensure the resulting interactive health technologies are functional, usable, and valuable [29].

This technical support center is framed within a broader thesis on multimedia tools for enhancing informed consent research. It provides researchers, scientists, and drug development professionals with the necessary resources to troubleshoot common technical and methodological challenges encountered when developing and evaluating these digital consent aids. The guidance below is built upon the core UCD principle that a focus on end-users—patients, caregivers, and clinicians—from the very start is not merely beneficial but essential for creating technologies that are effective and promote the intended health outcomes [29].

Key Concepts and Definitions

- User-Centered Design (UCD): An approach for developing applications that incorporates user-centered activities throughout the entire development process, allowing end-users to influence the design to increase ultimate usability [29].

- Interactive Health Technology (IHT): The interaction of an individual (consumer, patient, caregiver, or professional) with a computerized technology to access, monitor, share, or transmit health information [29].

- Informed Consent: The process of a patient or research participant willingly agreeing to a medical procedure or study participation after understanding the risks, benefits, and alternatives [2].

- Usability: The measure of the ease with which a system can be learned and used, including its safety, effectiveness, and efficiency [29].

Frequently Asked Questions (FAQs) & Troubleshooting

A. Methodological and Conceptual Challenges

Q1: Why is it critical to involve patients early in the design of a multimedia consent tool, rather than just testing a final product? A: Early involvement is a fundamental principle of UCD. It ensures that the development team accurately assesses user requirements and gains a deeper understanding of the users' goals, interests, and learning styles. This upfront research helps identify and resolve usability problems before the system is launched, substantially reducing development time and increasing user acceptance and the ultimate quality of the system [29]. Developing technology based on developer-driven needs, rather than those of the intended users, is a common pitfall that leads to poor adoption and effectiveness.

Q2: Our research team lacks expertise in design. How can we effectively recruit patient-users for UCD activities? A: After receiving IRB approval, employ purposive sampling to recruit a representative group of patient-volunteers. The sample should include members of both genders, racial and ethnic minorities, and individuals with physical or cognitive impairments that might affect use (e.g., tremors, blurred vision from medication). Research suggests that involving at least 5 users will expose the majority of usability problems [29]. For a multimedia consent tool, it is also crucial to include participants with varying levels of health literacy and computer experience.
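The "at least 5 users" guideline is usually traced to Nielsen and Landauer's model, in which the expected share of usability problems found by n users is 1 - (1 - p)^n, with p the probability that a single user encounters a given problem. The sketch below assumes their reported average of p = 0.31, which gives roughly 84% for five users; if p is lower, which may happen when users are more diverse, more participants are needed to reach the same coverage.

```python
def share_of_problems_found(n_users: int, p_detect: float = 0.31) -> float:
    """Expected share of usability problems found by n test users.

    Nielsen-Landauer model: 1 - (1 - p)^n. The default p = 0.31 is the
    average they reported across projects and is an assumption here.
    """
    if n_users < 0 or not 0.0 <= p_detect <= 1.0:
        raise ValueError("n_users must be >= 0 and p_detect must be in [0, 1]")
    return 1 - (1 - p_detect) ** n_users


if __name__ == "__main__":
    for n in (1, 3, 5, 10):
        print(n, round(share_of_problems_found(n), 2))
```

Running the same calculation with a smaller `p_detect` shows how quickly the required sample grows, which is one reason to test with several small, diverse rounds rather than a single round of five.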

Q3: What are the key UCD principles we should follow for a digital consent project? A: The three guiding principles, as defined by Gould & Lewis, are [29]:

- Focus on users and tasks early and throughout the design process.

- Measure usability empirically through observation and testing.

- Design and test usability iteratively, refining prototypes based on user feedback.

B. Technical and Implementation Challenges

Q4: We are considering using AI to generate or explain consent content. What are the key technical risks? A: While AI-based technologies show great potential, current research indicates they are not yet suitable for use without medical oversight. AI-generated patient information can lack consistent reliability, with risks of providing incomplete or misleading information [2]. Any implementation of AI must include a robust validation and oversight mechanism by qualified healthcare professionals to ensure the information is accurate and complete.

Q5: What are the essential features for a help center or knowledge base that supports a digital consent platform? A: A successful support system should [30]:

- Promote Self-Service: Include a comprehensive FAQ page and troubleshooting guides.

- Ensure a Great User Experience (UX): Provide easy navigation, an obvious search bar, and a visually appealing design that works seamlessly on mobile devices and computers.

- Use Data to Drive Success: Track metrics like resolution times and the types of content users are accessing to identify gaps and areas for improvement.

- Market the Help Center: Ensure users know the resource exists through prominent links and promotion.

Q6: How can we ensure our multimedia consent tool is accessible to users with visual impairments? A: Adhere to WCAG (Web Content Accessibility Guidelines) contrast standards. Level AA requires a contrast ratio of at least 4.5:1 for normal text and 3:1 for large-scale text, and the enhanced requirement (Level AAA) raises these to at least 7:1 and 4.5:1, respectively [5] [19]. The color palette provided in the "Diagram Specifications" section of this document is designed with these principles in mind, but each text and background pair should still be checked, because not every color in a palette meets every threshold.

Experimental Protocols and Workflows

A. Protocol: Applying UCD to the Development of an Interactive Consent Tool

The following protocol is adapted from the development of Pocket PATH, an interactive health technology for lung transplant patients, illustrating the systematic application of UCD [29].

1. Assemble an Interdisciplinary Development Team

- Purpose: To ensure the technology addresses the latest clinical, behavioral, and technical standards.

- Methodology: Form a team that includes at minimum: a principal investigator (e.g., a clinician or senior researcher), a human-computer interaction specialist, a programming engineer, a behavioral scientist, and a relevant medical expert (e.g., a transplant physician). This ensures multiple perspectives are considered during development [29].

2. Assess the Intended Users and Their Tasks

- Purpose: To understand the characteristics, limitations, and goals of the end-users.

- Methodology: Gather information from clinical records, literature, and pre-study interviews. Identify user characteristics that could impact use, such as age, prevalence of symptoms like tremors or blurred vision, individual preferences for information, and level of computer literacy [29]. Observe users performing relevant tasks in their actual environment if possible.

3. Recruit Representative Patients and Conduct Iterative Prototype Testing

- Purpose: To identify and resolve usability problems before launch.

- Methodology:

  a. Recruit a purposive sample of at least 5 patient-users who represent the diversity of the target population [29].

  b. Develop an initial low-fidelity prototype (e.g., wireframes, mock-ups).

  c. In one-on-one sessions, observe users interacting with the prototype. Ask them to think aloud as they attempt to complete core tasks, such as navigating through consent information or using a "teach-back" function.

  d. Empirically measure usability by noting task success rates, errors, and time-on-task.

  e. Analyze the findings, refine the prototype, and repeat the testing cycle until usability goals are met.
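Steps d and e can be tracked with a small summary per testing round. The sketch below assumes each observed attempt is logged with a success flag, an error count, and a duration; the `TaskAttempt` record and the goal thresholds in `goals_met` are placeholders, not values from the Pocket PATH study.

```python
from dataclasses import dataclass
from statistics import mean
from typing import Dict, List


@dataclass
class TaskAttempt:
    participant: str
    task: str
    success: bool
    errors: int
    seconds: float  # time-on-task


def summarize(attempts: List[TaskAttempt]) -> Dict[str, float]:
    """Aggregate one testing round into success rate, errors, and time-on-task."""
    return {
        "success_rate": sum(a.success for a in attempts) / len(attempts),
        "mean_errors": mean(a.errors for a in attempts),
        "mean_seconds": mean(a.seconds for a in attempts),
    }


def goals_met(summary: Dict[str, float], min_success: float = 0.9,
              max_errors: float = 1.0, max_seconds: float = 120.0) -> bool:
    # Placeholder goals; each team should set its own before testing starts.
    return (summary["success_rate"] >= min_success
            and summary["mean_errors"] <= max_errors
            and summary["mean_seconds"] <= max_seconds)
```

Running `summarize` after each refinement gives a simple record of whether successive iterations are converging on the team's usability goals.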

B. Workflow Diagram: UCD for Multimedia Consent Tools

The diagram below outlines the logical workflow and iterative nature of the User-Centered Design process as applied to multimedia consent tool development.

Diagram 1: UCD Workflow for Consent Tools

Data Presentation

Table 1: Key Performance Indicators for Evaluating a Support System for Digital Consent Tools

Tracking the right metrics is essential for evaluating the effectiveness of both the digital consent tool itself and the technical support system that underpins it. The following KPIs, derived from help desk and UCD best practices, provide measurable data for continuous improvement [30] [31].

| KPI Category | Specific Metric | Definition & Measurement Method | Target Benchmark |
|---|---|---|---|
| User Comprehension | Knowledge Retention Score | Score on a standardized quiz testing understanding of consent information, administered after using the tool. | >90% correct answers [2] |
| Usability | System Usability Scale (SUS) | A reliable, ten-item scale for measuring subjective usability. Users rate agreement with each item from 1 (Strongly Disagree) to 5 (Strongly Agree), and the responses are converted to a 0-100 score. | Score > 68 (above the commonly cited average) |
| Support Efficiency | First Contact Resolution (FCR) | The percentage of user support inquiries resolved during the first interaction. Measured via support ticketing system. | >70% [31] |
| Support Efficiency | Average Resolution Time | The average time taken from when a support ticket is logged until it is successfully resolved. | < 24 hours [31] |
| User Satisfaction | Customer Satisfaction (CSAT) Score | The percentage of users who rate their support experience as "Satisfied" or "Very Satisfied" (e.g., 4 or 5 on a 1-5 scale). | >90% [31] |
| Tool Effectiveness | Perceived Stress Reduction | User self-report on a Likert scale of anxiety or stress before and after the digital consent process. | Positive trend (evidence is mixed, so tracking is key) [2] |
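Several of these KPIs reduce to simple arithmetic. The sketch below applies the standard SUS scoring rule (odd-numbered items contribute the response minus 1, even-numbered items contribute 5 minus the response, and the sum is multiplied by 2.5) and computes FCR, average resolution time, and CSAT from a hypothetical `Ticket` record. The record's fields are assumptions about what a ticketing-system export would contain.

```python
from dataclasses import dataclass
from datetime import datetime
from statistics import mean
from typing import List, Optional


def sus_score(responses: List[int]) -> float:
    """Convert ten SUS responses (1-5) into the standard 0-100 score."""
    if len(responses) != 10 or not all(1 <= r <= 5 for r in responses):
        raise ValueError("SUS requires ten responses on a 1-5 scale")
    total = 0
    for item, r in enumerate(responses, start=1):
        # Odd items are positively worded, even items negatively worded.
        total += (r - 1) if item % 2 == 1 else (5 - r)
    return total * 2.5


@dataclass
class Ticket:
    opened: datetime
    resolved: datetime
    contacts: int               # interactions needed to resolve the ticket
    csat: Optional[int] = None  # 1-5 rating, None if the survey was skipped


def first_contact_resolution(tickets: List[Ticket]) -> float:
    return sum(t.contacts == 1 for t in tickets) / len(tickets)


def average_resolution_hours(tickets: List[Ticket]) -> float:
    return mean((t.resolved - t.opened).total_seconds() / 3600 for t in tickets)


def csat_rate(tickets: List[Ticket]) -> float:
    # "Satisfied" (4) or "Very Satisfied" (5) among answered surveys only.
    rated = [t.csat for t in tickets if t.csat is not None]
    return sum(r >= 4 for r in rated) / len(rated)
```

Computing CSAT over answered surveys only is a design choice; teams that count unanswered surveys differently should adjust the denominator and report which convention they used.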

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodological components, or "research reagents," essential for conducting a rigorous UCD process in the development of multimedia informed consent tools.

| Item / Solution | Function in the UCD Process | Specification & Application Notes |
|---|---|---|
| Interdisciplinary Team | Provides diverse expertise to identify and resolve issues from clinical, technical, and behavioral perspectives [29]. | Team should include a PI, computer scientist (HCI specialist), behavioral scientist, and relevant medical expert. |
| Low-Fidelity Prototypes | Allows for early and inexpensive testing of core concepts and workflows with users before significant development resources are expended [29]. | Can be paper sketches, wireframes, or clickable mock-ups. Used in initial iterative testing cycles. |
| Usability Testing Protocol | A standardized method for empirically measuring usability by observing real users interacting with the prototype [29]. | Includes a facilitator guide, predefined tasks, and a method for recording user actions, errors, and feedback (think-aloud protocol). |
| Purposive User Sample | A strategically recruited group of test participants that represents the diversity and key characteristics of the target patient population [29]. | At least 5 users, varying in age, gender, tech literacy, and any relevant physical or clinical characteristics. |
| Semi-Structured Interview Guide | Used to gather qualitative feedback on user expectations, comprehension, and perceived value of the consent tool, going beyond simple task completion [29]. | Contains open-ended questions to explore user perceptions in depth, complementing quantitative usability metrics. |
| Multimedia Content Library | A repository of approved, patient-friendly media assets (videos, images, animations) used to explain complex medical procedures and concepts within the tool [13]. | Content must be clinically accurate, vetted by medical experts, and designed for low health literacy levels. |

Troubleshooting Guides and FAQs

This technical support center provides practical solutions for researchers implementing multimedia informed consent tools. The guidance is framed within the context of enhancing comprehension, autonomy, and engagement in clinical research.

Self-Directed Informed Consent Kiosks

Self-service kiosks allow potential participants to review consent information independently before engaging with study staff.

Common Technical Issues & Solutions

- Problem: Touchscreen unresponsive or difficult to use.

- Solution: Ensure kiosk software uses large, customizable touch targets. Standard radio buttons and checkboxes are often too small for reliable stylus or finger use [32]. Increase the selection area significantly.

- Problem: Participant reports text is too small to read.

- Solution: Implement a "zoom" or "text size" feature within the kiosk software. Avoid the small default fonts supplied by standard development toolkits; use larger, simple fonts for better readability [32].

- Problem: Low color contrast makes content hard to read.

- Solution: Adhere to WCAG enhanced contrast guidelines (Level AAA). For standard text, ensure a contrast ratio of at least 7:1 between text and background. For large-scale text (at least 18 pt, or 14 pt bold), a minimum ratio of 4.5:1 is required [5].

- Problem: Kiosk interface feels cluttered or overwhelming.

Frequently Asked Questions

- Q: Can a kiosk-based consent process fully replace a paper consent form?

- A: While patients often report that multimedia systems could replace paper documents [17], regulatory acceptance varies. For FDA-regulated, greater-than-minimal-risk studies, the system must be 21 CFR Part 11 compliant to document legally effective signatures [33]. Always obtain IRB approval for any consent process change.

- Q: What are the main benefits of using a self-directed kiosk?

- A: Patients report a greater sense of control and less stress because they can proceed at their own pace. The use of video and multimedia can make complex information more understandable [17].

Coordinator-Assisted Tablet-Based Consent

This model uses tablets (e.g., iPads) with a study coordinator present to assist and answer questions.

Common Technical Issues & Solutions

- Problem: Participants struggle with navigation or miss key sections.

- Solution: The tool should have a clear, linear workflow with a progress indicator. Tools such as the Virtual Multimedia Interactive Informed Consent (VIC) tool also let participants move back and forth between sections and click links for more information [16].

- Problem: How to verify participant comprehension during the process.

- Solution: Integrate automated comprehension quizzes or the teach-back technique directly into the application. This helps emphasize the information presented and allows the coordinator to address misunderstandings immediately [16].

- Problem: Verifying that the person completing e-consent is the intended participant.

- Solution: For greater-than-minimal-risk studies not FDA-regulated, build an authentication mechanism. Provide a unique code directly to the participant during the consent conversation, which they must enter to proceed [33].

- Problem: Inconsistent user experience across different tablet models or operating systems.

- Solution: Consider a Bring Your Own Device (BYOD) approach, which leverages patients' familiarity with their own devices to minimize learning curves. Alternatively, ensure your solution is deployed in multiple modalities and is cross-platform (e.g., Android/iOS) [34].
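The comprehension-quiz approach described above can be sketched as follows: score the participant's answers and return the consent sections linked to missed questions, so the coordinator knows which topics to revisit with teach-back. The `QuizItem` structure and case-insensitive exact-match grading are simplifying assumptions; this is not the VIC tool's implementation.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class QuizItem:
    section: str   # consent section the question checks
    question: str
    answer: str    # expected answer


def review_quiz(items: List[QuizItem],
                responses: Dict[str, str]) -> Tuple[float, List[str]]:
    """Return the fraction answered correctly and the sections to revisit."""
    missed = [
        item.section
        for item in items
        # Unanswered questions count as missed.
        if responses.get(item.question, "").strip().lower() != item.answer.lower()
    ]
    return 1 - len(missed) / len(items), missed
```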

Frequently Asked Questions

- Q: Is there evidence that tablet-based consent improves understanding?

- A: A randomized controlled trial of the VIC tool (n=50) found high comprehension in both the VIC and paper arms; VIC participants additionally reported higher satisfaction, greater perceived ease of use, and a shorter perceived time to complete the process [16].

- Q: What features are most important in a multimedia consent tool?

- A: Effective tools often combine a user-centered design with Mayer’s cognitive theory of multimedia learning. Key features include a comprehensive multimedia library (video, animations), text-to-speech, comprehension quizzes, and accessibility options [16].

Remote Consent and Electronic Signatures

Remote consent occurs when the study team and participant are not in the same physical location, using paper forms or electronic consent (e-consent) systems [33].

Common Technical Issues & Solutions

- Problem: Choosing the right e-consent system for an FDA-regulated study.

- Solution: For FDA-regulated, greater-than-minimal-risk research requiring documented consent, you must use a Part 11 compliant system like DocuSign. The standard JHU instance of REDCap is not Part 11 compliant and cannot be used for this purpose [33].

- Problem: Participant cannot or will not use an e-signature system.

- Solution: Implement a remote paper consent process. Provide the consent form via email/fax/mail, conduct the consent discussion via phone/video conference, and have the participant sign a hard copy and return it via email/fax/mail [33].

- Problem: Ensuring the person providing e-consent is the correct participant.

- Solution: For greater-than-minimal-risk, non-FDA-regulated studies using REDCap, incorporate a direct authentication mechanism, such as providing a unique code to the participant during the consent conversation [33].
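The unique-code authentication step can be sketched as below: staff generate a short random code, read it to the participant during the consent conversation, and the e-consent form accepts the entry only if it matches and has not expired. The 30-minute validity window, the character set, and the function names are assumptions; the sketch uses Python's standard `secrets` and `hmac` modules and does not call any REDCap API.

```python
import hmac
import secrets
import string
from datetime import datetime, timedelta, timezone
from typing import Optional, Tuple

# Uppercase letters and digits without look-alikes (0/O, 1/I/L); an assumed choice.
ALPHABET = "".join(c for c in string.ascii_uppercase + string.digits
                   if c not in "0O1IL")


def issue_code(length: int = 6,
               valid_for: timedelta = timedelta(minutes=30)) -> Tuple[str, datetime]:
    """Generate a random access code and its expiry time (UTC)."""
    code = "".join(secrets.choice(ALPHABET) for _ in range(length))
    return code, datetime.now(timezone.utc) + valid_for


def verify_code(entered: str, issued: str, expires: datetime,
                now: Optional[datetime] = None) -> bool:
    """Accept the participant's entry only if it matches and has not expired."""
    now = now or datetime.now(timezone.utc)
    if now > expires:
        return False
    # Normalize case and whitespace, then compare in constant time.
    return hmac.compare_digest(entered.strip().upper().encode(), issued.encode())
```

Because the code is shared only by voice during the consent conversation, a correct entry indicates that the person completing the form took part in that conversation.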

Frequently Asked Questions

- Q: What is the difference between "Remote Consent" and "E-Consent"?

- A: They are distinct concepts. E-Consent refers to using an electronic system (like REDCap or DocuSign) instead of paper. Remote Consent means the consent process occurs when the parties are not physically together. E-consent can be used for in-person or remote consent processes [33].

- Q: Can we start study procedures before a signed consent form is returned?

- A: No study-related procedures may begin until the study team possesses a signed copy of the consent (faxed, emailed, or mailed), unless the IRB has specifically approved a waiver of documentation of consent for select activities [33].
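The rule above amounts to a gate that study systems can check before scheduling any procedure. This hypothetical sketch assumes the participant record tracks whether a signed copy has been received and which activities, if any, are covered by an IRB-approved waiver of documentation of consent.

```python
from dataclasses import dataclass, field
from typing import Set


@dataclass
class ParticipantRecord:
    # True once a signed copy (faxed, emailed, or mailed) is on file.
    signed_consent_received: bool = False
    # Activities covered by an IRB-approved waiver of documentation of consent.
    waived_activities: Set[str] = field(default_factory=set)


def may_begin(record: ParticipantRecord, activity: str) -> bool:
    """Allow a study procedure only if consent is documented or waived for it."""
    return record.signed_consent_received or activity in record.waived_activities
```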

The table below summarizes quantitative data from studies investigating multimedia consent tools.

Table 1: Quantitative Findings from Multimedia Consent Studies

| Study Description | Comprehension & Usability Results | Participant Satisfaction & Feedback |
|---|---|---|
| Randomized Controlled Trial of VIC Tool (n=50) [16] | Both VIC (n=25) and paper (n=25) groups had high comprehension. | VIC participants reported higher satisfaction, higher perceived ease of use, and a shorter perceived time to complete the process. |
| Multimedia Tool in Low-Literacy Gambian Population [15] | Differences in mean scores for 'recall' and 'understanding' between first and second visits were statistically significant (p<0.00001). | 70% of participants reported the multimedia tool was clear and easy to understand. |
| Early Multimedia Prototype (1998) [17] | N/A - Feasibility study | Patients felt the system was useful, less stressful, and provided a greater sense of control. They liked the modular information and found video improved understanding. |

Detailed Methodology: VIC Randomized Controlled Trial

This protocol can be adapted for testing similar multimedia consent tools [16].

- 1. Trial Design: A randomized controlled trial comparing the multimedia tool (intervention) with a standard paper consent (control). The allocation ratio is 1:1.

- 2. Participants & Setting:

- Recruit from the parent study's population (e.g., clinic patients and healthy community volunteers).

- Inclusion Criteria: Speaks the tool's language, meets age requirement, has an email address, willing to use the provided tablet. Computer literacy is not required.

- 3. Randomization: Use a computer-generated randomization sequence with minimization to balance demographic characteristics (e.g., gender, race, education, technology confidence).

- 4. Intervention Arm:

- Participants use the multimedia tool (e.g., VIC on an iPad).